Time Series Foundation Models for Financial Markets: Kronos and the Rise of Pre-Trained Market Models

The quant finance industry has spent decades building specialized models for every conceivable forecasting task: GARCH variants for volatility, ARIMA for mean reversion, Kalman filters for state estimation, and countless proprietary approaches for statistical arbitrage. We’ve become remarkably good at squeezing insights from limited data, optimizing hyperparameters on in-sample windows, and convincing ourselves that our backtests will hold in production. Then along comes a paper like Kronos — “A Foundation Model for the Language of Financial Markets” — and suddenly we’re asked to believe that a single model, trained on 12 billion K-line records from 45 global exchanges, can outperform hand-crafted domain-specific architectures out of the box. That’s a bold claim. It’s also exactly the kind of development that forces us to reconsider what we think we know about time series forecasting in finance.

The Foundation Model Paradigm Comes to Finance

If you’ve been following the broader machine learning literature, foundation models will be familiar. The term refers to large-scale pre-trained models that serve as versatile starting points for diverse downstream tasks: think BERT and GPT for language, or CLIP for joint vision-language representations. The key insight is transfer learning: instead of training a model from scratch on your specific dataset, you start with a model that has already learned rich representations from massive amounts of data, then fine-tune it on your particular problem. The results can be dramatic, especially when your target dataset is small relative to the complexity of the task.

Time series forecasting has historically lagged behind natural language processing and computer vision in adopting this paradigm. Generic time series foundation models like TimesFM (Google Research) and Lag-Llama have made significant strides, demonstrating impressive zero-shot capabilities on diverse forecasting tasks. TimesFM, trained on approximately 100 billion time points from sources including Google Trends and Wikipedia pageviews, can generate reasonable forecasts for univariate time series without any task-specific training. Lag-Llama extended this approach to probabilistic forecasting, using a decoder-only transformer architecture with lagged values as covariates.

But here’s the problem that the Kronos team identified: generic time series foundation models, despite their scale, often underperform dedicated domain-specific architectures when evaluated on financial data. This shouldn’t be surprising. Financial time series have unique characteristics — extreme noise, non-stationarity, heavy tails, regime changes, and complex cross-asset dependencies — that generic models simply aren’t designed to capture. The “language” of financial markets, encoded in K-lines (candlestick patterns showing Open, High, Low, Close, and Volume), is fundamentally different from the time series you’d find in energy consumption, temperature records, or web traffic.

Enter Kronos: A Foundation Model Built for Finance

Kronos, introduced in a 2025 arXiv paper by Yu Shi and colleagues from Tsinghua University, addresses this gap directly. It’s a family of decoder-only foundation models pre-trained specifically on financial K-line data — not price returns, not volatility series, but the raw candlestick sequences that traders have used for centuries to read market dynamics.

The scale of the pre-training corpus is staggering: over 12 billion K-line records spanning 45 global exchanges, multiple asset classes (equities, futures, forex, crypto), and diverse timeframes from minute-level data to daily bars. This is not a model that has seen a few thousand time series. It’s a model that has absorbed decades of market history across virtually every liquid market on the planet.

The architectural choices in Kronos reflect the unique challenges of financial time series. Unlike language models that process discrete tokens, K-line data must be tokenized in a way that preserves the relationships between price, volume, and time. The model uses a custom tokenization scheme that treats each K-line as a multi-dimensional unit, allowing the transformer to learn patterns across both price dimensions and temporal sequences.
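To make the idea of tokenizing a K-line concrete, here is a minimal sketch. It is not the tokenizer used by Kronos: the function name `tokenize_klines`, the five scale-free features, and the quantile binning are all assumptions chosen for illustration, and the bars are synthetic.

```python
import numpy as np

def tokenize_klines(ohlcv, n_bins=16):
    """Map each (O, H, L, C, V) row to five integer bin ids.

    Illustrative scheme only; this is not the tokenizer used by Kronos.
    """
    o, h, l, c, v = np.asarray(ohlcv, dtype=float).T
    prev_close = np.concatenate([[o[0]], c[:-1]])
    # Scale-free features so bars at different price levels are comparable
    feats = np.column_stack([
        np.log(o / prev_close),  # gap from the previous close
        np.log(h / o),           # upper excursion within the bar
        np.log(l / o),           # lower excursion within the bar
        np.log(c / o),           # open-to-close return
        np.log1p(v),             # compressed volume
    ])
    tokens = np.empty(feats.shape, dtype=int)
    for j in range(feats.shape[1]):
        # Interior quantile edges give roughly equal-population bins
        edges = np.quantile(feats[:, j], np.linspace(0, 1, n_bins + 1)[1:-1])
        tokens[:, j] = np.digitize(feats[:, j], edges)
    return tokens

# Synthetic bars, purely for demonstration
rng = np.random.default_rng(1)
n = 500
close = 100 * np.exp(np.cumsum(rng.normal(0, 0.01, n)))
prev = np.concatenate([[100.0], close[:-1]])
open_ = prev * np.exp(rng.normal(0, 0.002, n))
high = np.maximum(open_, close) * (1 + rng.uniform(0, 0.005, n))
low = np.minimum(open_, close) * (1 - rng.uniform(0, 0.005, n))
volume = rng.lognormal(10, 0.5, n)
bars = np.column_stack([open_, high, low, close, volume])

tokens = tokenize_klines(bars)
print(tokens[:3])
```

Note that the bin edges here are fitted on the whole sample, which would leak future information in a real pipeline; in practice they would be estimated on training data only.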

What Makes Kronos Different: Architecture and Methodology

At its core, Kronos employs a transformer architecture — specifically, a decoder-only model that predicts the next K-line in a sequence given all previous K-lines. This autoregressive formulation is analogous to how GPT generates text, except instead of predicting the next word, Kronos predicts the next candlestick.

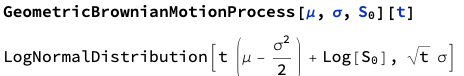

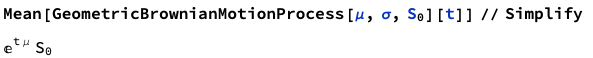

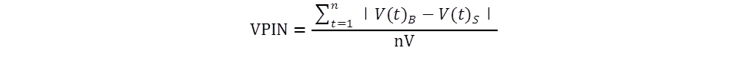

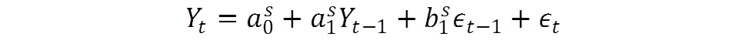

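The mathematical formulation is worth understanding in detail. Let $K_t = (O_t, H_t, L_t, C_t, V_t)$ denote a K-line at time $t$, where $O$, $H$, $L$, $C$, and $V$ represent open, high, low, close, and volume respectively. The model learns a probability distribution $P(K_{t+1:T} \mid K_{1:t})$ over future candlesticks conditioned on historical sequences, where $T$ marks the end of the forecast horizon. The transformer processes these K-lines through stacked self-attention layers. Writing $X$ for the matrix of input representations, each layer forms

$$Q = X W_Q, \qquad K = X W_K, \qquad V = X W_V,$$

where the query, key, and value projections are learned linear transformations of the input representations (in this standard notation $K$ and $V$ are the key and value matrices, not a K-line or volume). The attention mechanism computes:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V,$$

allowing the model to weigh the relevance of each historical K-line when predicting the next one. Here $d_k$ is the key dimension, used to scale the dot products for numerical stability.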

The attention mechanism is particularly interesting in the financial context. Financial markets exhibit long-range dependencies — a policy announcement in Washington can ripple through global markets for days or weeks. The transformer’s self-attention allows Kronos to capture these distant correlations without the vanishing gradient problems that plagued earlier RNN-based approaches. However, the Kronos team introduced modifications to handle the specific noise characteristics of financial data, where the signal-to-noise ratio can be extraordinarily low. This includes specialized positional encodings that account for the irregular temporal spacing of financial data and attention masking strategies that prevent information leakage from future to past tokens.
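The mechanics of masked self-attention are easy to check by hand. The sketch below is a generic single-head implementation in NumPy, not Kronos’s code; the dimensions and random weights are arbitrary assumptions. It shows how the causal mask forces every position to attend only to itself and earlier positions.

```python
import numpy as np

def causal_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention with a causal mask."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    d_k = k.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)                        # (T, T) similarity matrix
    future = np.triu(np.ones(scores.shape, dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)             # hide future positions
    scores = scores - scores.max(axis=-1, keepdims=True)   # stabilise the softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
T, d_model, d_k = 6, 8, 4          # arbitrary illustrative sizes
x = rng.normal(size=(T, d_model))  # stand-in for embedded K-lines
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, weights = causal_attention(x, w_q, w_k, w_v)
print(np.round(weights, 2))        # upper triangle is exactly zero
```

Each row of `weights` sums to one, and the zeros above the diagonal are what prevents information from leaking backwards from future bars.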

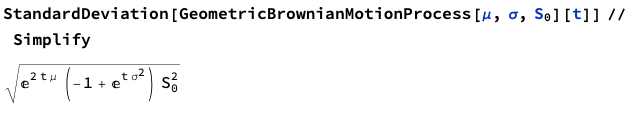

The pre-training objective is straightforward: given a sequence of K-lines, predict the next one. This is formally a maximum likelihood estimation problem:

$$\theta^{*} = \arg\max_{\theta} \sum_{t} \log P_{\theta}(K_{t+1} \mid K_{1:t}),$$

where $\theta$ represents the model parameters and the sum runs over every position in every training sequence. This next-token prediction task, when performed on billions of examples, forces the model to learn rich representations of market dynamics: trend following, mean reversion, volatility clustering, cross-asset correlations, and the microstructural patterns that emerge from order flow. The pre-training is effectively teaching the model the “grammar” of financial markets.

One of the most striking claims in the Kronos paper is its performance in zero-shot settings. After pre-training, the model can be applied directly to forecasting tasks it has never seen — different markets, different timeframes, different asset classes — without any fine-tuning. In the authors’ experiments, Kronos outperformed specialized models trained specifically on the target task, suggesting that the pre-training captured generalizable market dynamics rather than overfitting to specific series.

Beyond Price Forecasting: The Full Range of Applications

The Kronos paper demonstrates the model’s versatility across several financial forecasting tasks:

Price series forecasting is the most obvious application. Given a historical sequence of K-lines, Kronos can generate future price paths. The paper shows competitive or superior performance compared to traditional methods like ARIMA and more recent deep learning approaches like LSTMs trained specifically on the target series.

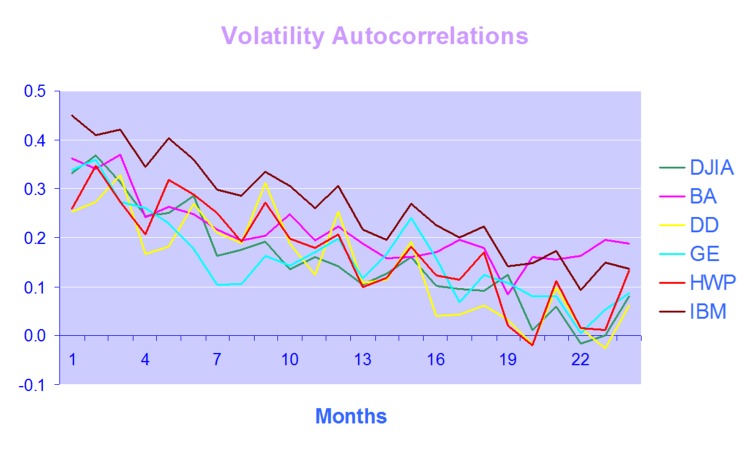

Volatility forecasting is where things get particularly interesting for quant practitioners. Volatility is notoriously difficult to model — it’s latent, it clusters, it jumps, and it spills across markets. Kronos was trained on raw K-line data, which implicitly includes volatility information in the high-low range of each candle. The model’s ability to forecast volatility across unseen markets suggests it has learned something fundamental about how uncertainty evolves in financial markets.

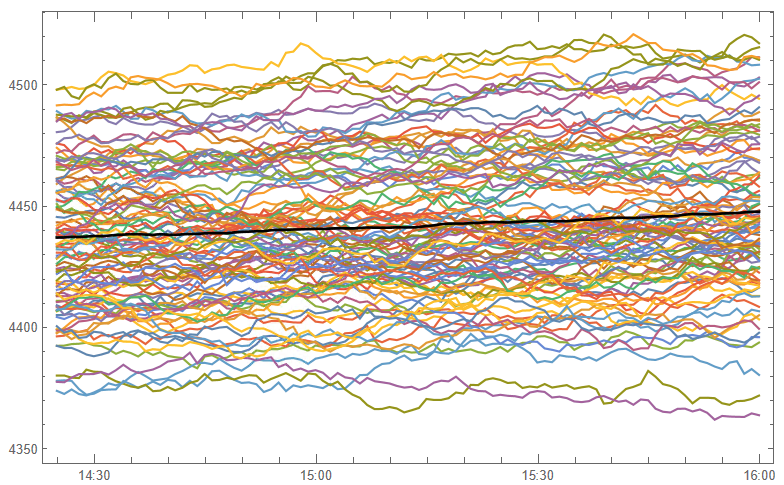

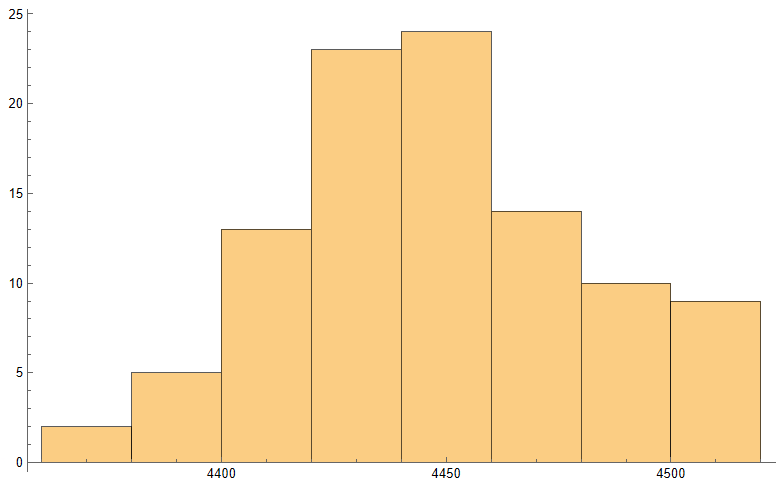

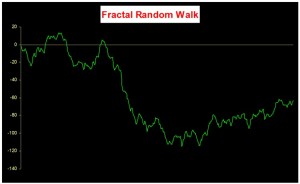

Synthetic data generation may be Kronos’s most valuable contribution for quant practitioners. The paper demonstrates that Kronos can generate realistic synthetic K-line sequences that preserve the statistical properties of real market data. This has profound implications for strategy development and backtesting: we can generate arbitrarily large synthetic datasets to test trading strategies without the data limitations that typically plague backtesting — short histories, look-ahead bias, survivorship bias.

Cross-asset dependencies are naturally captured in the pre-training. Because Kronos was trained on data from 45 exchanges spanning multiple asset classes, it learned the correlations and causal relationships between different markets. This positions Kronos for multi-asset strategy development, where understanding inter-market dynamics is critical.

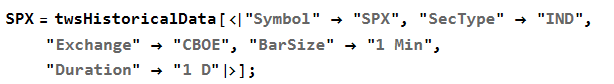

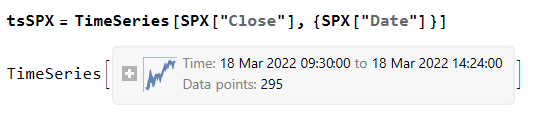

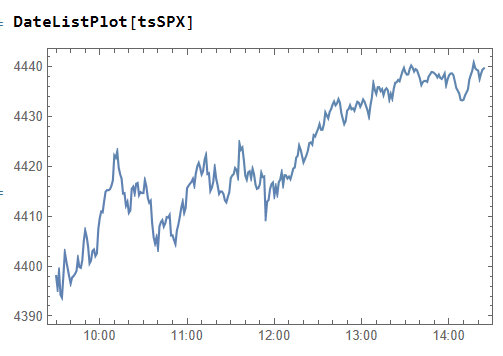

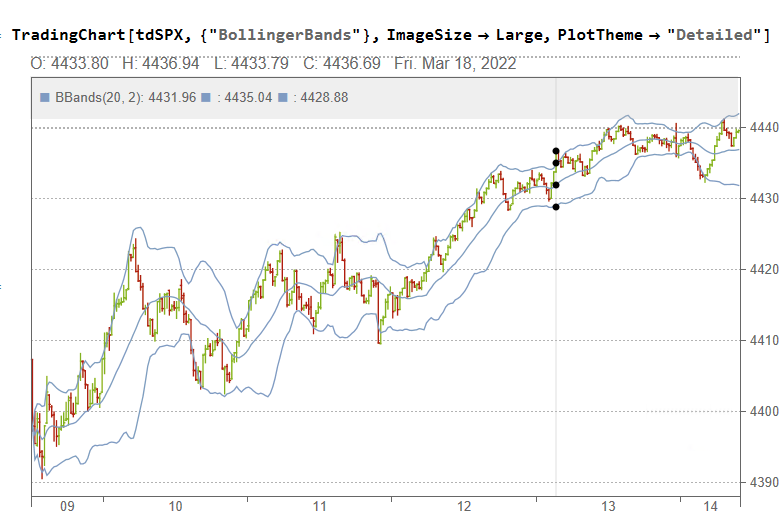

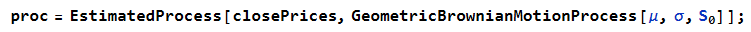

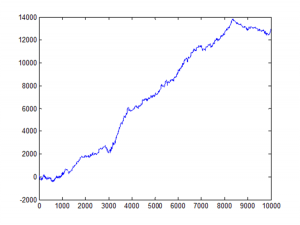

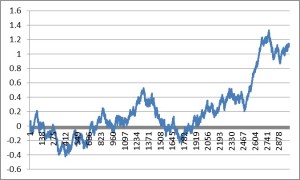

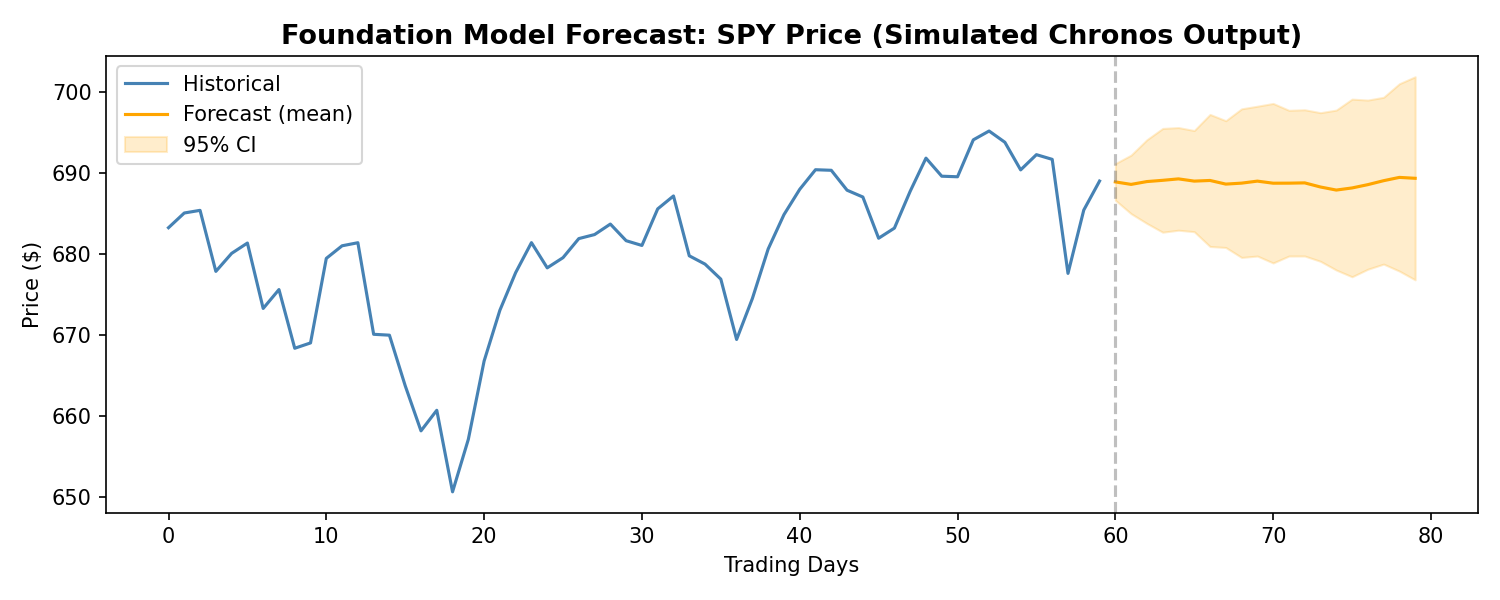

Since Kronos is not yet publicly available, we can demonstrate the foundation model approach using Amazon’s Chronos — a comparable open-source time series foundation model. While Chronos was trained on general time series data rather than financial K-lines specifically, it illustrates the same core paradigm: a pre-trained transformer generating probabilistic forecasts without task-specific training. Here’s a practical demo on real financial data:

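```python
import numpy as np
import torch
import yfinance as yf
import matplotlib.pyplot as plt
from chronos import ChronosPipeline

# Load the pre-trained model; fall back to CPU when no GPU is available
device = "cuda" if torch.cuda.is_available() else "cpu"
pipeline = ChronosPipeline.from_pretrained("amazon/chronos-t5-large", device_map=device)

# Fetch E-mini S&P 500 futures and keep the last 60 daily closes as context
data = yf.download("ES=F", period="6mo", progress=False)
close = data["Close"].squeeze()  # recent yfinance versions return a one-column DataFrame
context = close.to_numpy(dtype=np.float32)[-60:]

# predict() expects a torch tensor and returns sample paths shaped
# [num_series, num_samples, prediction_length]
samples = pipeline.predict(torch.tensor(context), prediction_length=20)[0].numpy()
low, median, high = np.quantile(samples, [0.1, 0.5, 0.9], axis=0)

# Plot the history, the median forecast, and an 80% interval
fig, ax = plt.subplots(figsize=(10, 4))
ax.plot(range(60), context, label="Historical", color="steelblue")
ax.plot(range(60, 80), median, label="Median forecast", color="orange")
ax.fill_between(range(60, 80), low, high, color="orange", alpha=0.2, label="80% interval")
ax.axvline(x=59, color="gray", linestyle="--", alpha=0.5)
ax.set_title("Chronos Forecast: ES Futures (20-day)")
ax.legend()
plt.tight_layout()
plt.show()
```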

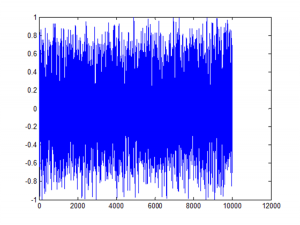

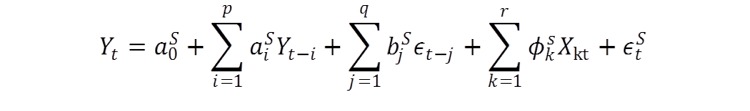

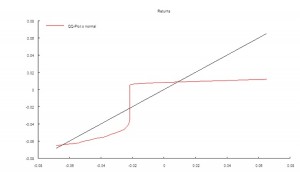

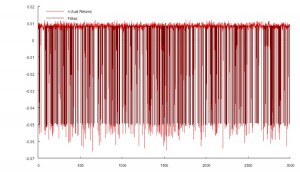

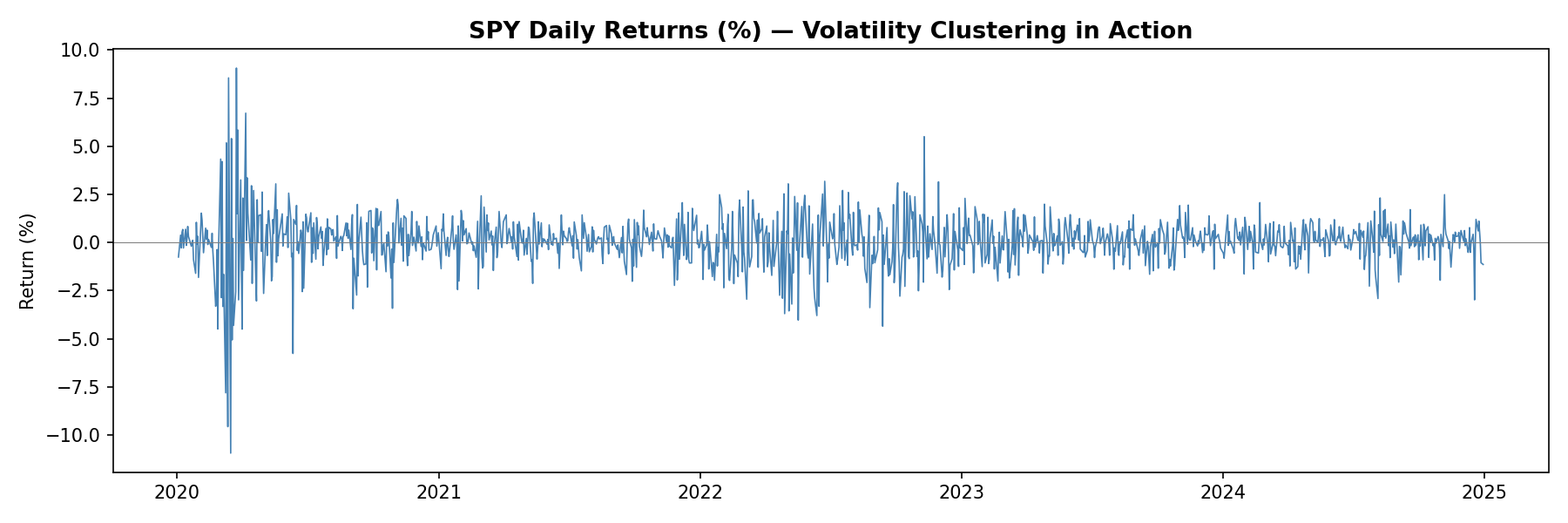

SPY Daily Returns — Volatility Clustering in Action

Zero-Shot vs. Fine-Tuned Performance: What the Evidence Shows

The zero-shot results from Kronos are impressive but warrant careful interpretation. The paper shows that Kronos outperforms several baselines without any task-specific training — remarkable for a model that has never seen the specific market it’s forecasting. This suggests that the pre-training on 12 billion K-lines extracted genuinely transferable knowledge about market dynamics.

However, fine-tuning consistently improves performance. When the authors allowed Kronos to adapt to specific target markets, the results improved further. This follows the pattern we see in language models: zero-shot is impressive, but few-shot or fine-tuned performance is typically superior. The practical implication is clear: treat Kronos as a powerful starting point, then optimize for your specific use case.

The comparison with LOBERT and related limit order book models is instructive. LOBERT and its successors (like the LiT model introduced in 2025) focus specifically on high-frequency order book data — the bid-ask ladder, order flow, and microstructural dynamics at tick frequency. These are fundamentally different from K-line models. Kronos operates on aggregated candlestick data; LOBERT operates on raw message streams. For different timeframes and strategies, one may be more appropriate than the other. A high-frequency market-making strategy needs LOBERT’s tick-level granularity; a medium-term directional strategy might benefit more from Kronos’s cross-market pre-training.

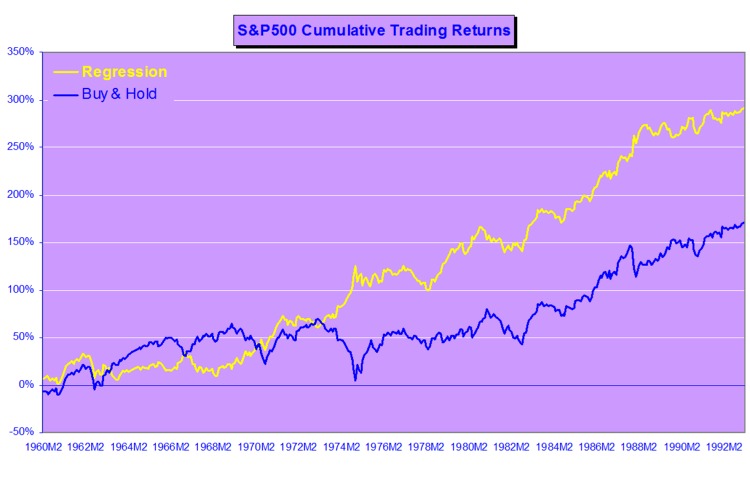

Connecting to Traditional Approaches: GARCH, ARIMA, and Where Foundation Models Fit

Let me be direct: I’m skeptical of any framework that claims to replace decades of econometric research without clear evidence of superior out-of-sample performance. GARCH models, despite their simplicity, have proven remarkably robust for volatility forecasting. ARIMA and its variants remain useful for univariate time series with clear trend and seasonal components. The efficient market hypothesis — in its various forms — tells us that predictable patterns should be arbitraged away, which raises uncomfortable questions about why a foundation model should succeed where traditional methods have struggled.

That said, there’s a nuanced way to think about this. Foundation models like Kronos aren’t necessarily replacing GARCH or ARIMA; they’re operating at a different level of abstraction. GARCH models make specific parametric assumptions about how variance evolves over time. Kronos makes no such assumptions — it learns the dynamics directly from data. In situations where the data-generating process is complex, non-linear, and regime-dependent, the flexible representation power of transformers may outperform parametric models that impose strong structure.

Consider volatility forecasting, traditionally the domain of GARCH. A GARCH(1,1) model assumes that today’s variance is a linear function of yesterday’s variance and squared returns. This is obviously a simplification. Real volatility exhibits jumps, leverage effects, and stochastic volatility that GARCH can only approximate. Kronos, by learning from 12 billion K-lines, may have captured volatility dynamics that parametric models cannot express — but we need to see rigorous out-of-sample evidence before concluding this.
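For readers who want the recursion spelled out, GARCH(1,1) updates the conditional variance as σ²(t) = ω + α·r²(t−1) + β·σ²(t−1). The sketch below simulates that process with NumPy; the parameter values are illustrative assumptions, not estimates from any market.

```python
import numpy as np

def simulate_garch11(omega, alpha, beta, n, seed=0):
    """Simulate returns whose conditional variance follows GARCH(1,1)."""
    rng = np.random.default_rng(seed)
    var = np.empty(n)
    ret = np.empty(n)
    var[0] = omega / (1 - alpha - beta)  # start at the unconditional variance
    ret[0] = np.sqrt(var[0]) * rng.standard_normal()
    for t in range(1, n):
        # Today's variance is linear in yesterday's squared return and variance
        var[t] = omega + alpha * ret[t - 1] ** 2 + beta * var[t - 1]
        ret[t] = np.sqrt(var[t]) * rng.standard_normal()
    return ret, var

# Illustrative parameters: high persistence (alpha + beta = 0.98)
ret, var = simulate_garch11(omega=0.05, alpha=0.08, beta=0.90, n=2000)
print(f"unconditional variance: {0.05 / (1 - 0.08 - 0.90):.2f}")
print(f"sample variance:        {ret.var():.2f}")
```

Everything the model can express lives in that one line of the loop, which is both its appeal (transparency) and its limitation (no jumps, no asymmetric response to negative returns).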

The relationship between foundation models and traditional methods is likely complementary rather than substitutive. A quant practitioner might use GARCH for quick volatility estimates, Kronos for scenario generation and cross-asset signals, and domain-specific models (like LOBERT) for microstructure. The key is understanding each tool’s strengths and limitations.

Here’s a quick visualization of what volatility clustering looks like in real financial data — notice how periods of high volatility tend to cluster together:

```python
import yfinance as yf
import matplotlib.pyplot as plt

# Fetch SPY data and compute daily percentage returns
data = yf.download("SPY", start="2020-01-01", end="2024-12-31", progress=False)
close = data["Close"].squeeze()  # recent yfinance versions return a one-column DataFrame
returns = close.pct_change().dropna() * 100
sigma = returns.std()

fig, ax = plt.subplots(figsize=(12, 4))
ax.plot(returns.index, returns.values, color="steelblue", linewidth=0.8)
ax.axhline(y=sigma, color="red", linestyle="--", alpha=0.5, label="1 Std Dev")
ax.axhline(y=-sigma, color="red", linestyle="--", alpha=0.5)
ax.set_title("Daily Returns (%): Volatility Clustering Visible", fontsize=12)
ax.set_ylabel("Return %")
ax.legend()
plt.tight_layout()
plt.show()
```

Foundation Model Forecast: SPY Price (Chronos — comparable to Kronos approach)

Practical Implications for Quant Practitioners

For those of us building trading systems, what does this actually mean? Several practical considerations emerge:

Data efficiency is perhaps the biggest win. Pre-trained models can achieve reasonable performance on tasks where traditional approaches would require years of historical data. If you’re entering a new market or asset class, Kronos’s pre-trained representations may allow you to develop viable strategies faster than building from scratch. Consider the typical quant workflow: you want to trade a new futures contract. Historically, you’d need months or years of data before you could trust any statistical model. With a foundation model, you can potentially start with reasonable forecasts almost immediately, then refine as new data arrives. This changes the economics of market entry.

Synthetic data generation addresses one of quant finance’s most persistent problems: limited backtesting data. Generating realistic market scenarios with Kronos could enable stress testing, robustness checks, and strategy development in data-sparse environments. Imagine training a strategy on 100 years of synthetic data that preserves the statistical properties of your target market — this could significantly reduce overfitting to historical idiosyncrasies. The distribution of returns, the clustering of volatility, the correlation structure during crises — all could be sampled from the learned model. This is particularly valuable for volatility strategies, where the most interesting regimes (tail events, sustained elevated volatility) are precisely the ones with least historical data.

Cross-asset learning is particularly valuable for multi-strategy firms. Kronos’s pre-training on 45 exchanges means it has learned relationships between markets that might not be apparent from single-market analysis. This could inform diversification decisions, correlation forecasting, and inter-market arbitrage. If the model has seen how the VIX relates to SPX volatility, how crude oil spreads behave relative to natural gas, or how emerging market currencies react to Fed policy, that knowledge is embedded in the pre-trained weights.

Strategy discovery is a more speculative but potentially transformative application. Foundation models can identify patterns that human intuition misses. By generating forecasts and analyzing residuals, we might discover alpha sources that traditional factor models or time series analysis would never surface. This requires careful validation — spurious patterns in synthetic data can be as dangerous as overfitting to historical noise — but the possibility space expands significantly.

Integration challenges should not be underestimated. Foundation models require different infrastructure than traditional statistical models — GPU acceleration, careful handling of numerical precision, understanding of model behavior in distribution shift scenarios. The operational overhead is non-trivial. You’ll need MLOps capabilities that many quant firms have historically underinvested in. Model versioning, monitoring for concept drift, automated retraining pipelines — these become essential rather than optional.

There’s also a workflow consideration. Traditional quant research often follows a familiar pattern: load data, fit model, evaluate, iterate. Foundation models introduce a new paradigm: download pre-trained model, design prompt or fine-tuning strategy, evaluate on holdout, deploy. The skills required are different. Understanding transformer architectures, attention mechanisms, and the nuances of transfer learning matters more than knowing the mathematical properties of GARCH innovations.

For teams considering adoption, I’d suggest a staged approach. Start with the zero-shot capabilities to establish baselines. Then explore fine-tuning on your specific datasets. Then investigate synthetic data generation for robustness testing. Each stage builds organizational capability while managing risk. Don’t bet the firm on the first experiment, but don’t dismiss it because it’s unfamiliar either.

Limitations and Open Questions

I want to be clear-eyed about what we don’t yet know. The Kronos paper, while impressive, represents early research. Several critical questions remain:

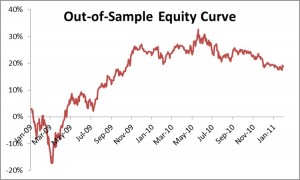

Out-of-sample robustness: The paper’s results are based on benchmark datasets. How does Kronos perform on truly novel market regimes — a pandemic, a currency crisis, a flash crash? Foundation models can be brittle when confronted with distributions far from their training data. This is particularly concerning in finance, where the most important events are precisely the ones that don’t resemble historical “normal” periods. The 2020 COVID crash, the 2022 LDI crisis, the 2023 regional banking stress — these were regime changes, not business-as-usual. We need evidence that Kronos handles these appropriately.

Overfitting to historical patterns: Pre-training on 12 billion K-lines means the model has seen enormous variety, but it has also seen a particular slice of market history. Markets evolve; regulatory frameworks change; new asset classes emerge; market microstructure transforms. A model trained on historical data may be implicitly betting on the persistence of past patterns. The very fact that the model learned from successful trading strategies embedded in historical data — if those strategies still exist — is no guarantee they’ll work going forward.

Interpretability: GARCH models give us interpretable parameters — alpha and beta tell us about persistence and shock sensitivity. Kronos is a black box. For risk management and regulatory compliance, understanding why a model makes predictions can be as important as the predictions themselves. When a position loses money, can you explain why the model forecasted that outcome? Can you stress-test the model by understanding its failure modes? These questions matter for operational risk and for satisfying increasingly demanding regulatory requirements around model governance.

Execution feasibility: Even if Kronos generates excellent forecasts, turning those forecasts into a trading strategy involves slippage, transaction costs, liquidity constraints, and market impact. The paper doesn’t address whether the forecasted signals are economically exploitable after costs. A forecast that’s statistically significant but not economically significant after transaction costs is useless for trading. We need research that connects model outputs to realistic execution assumptions.
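A rough way to see how quickly costs eat a small edge is to charge a proportional cost on every change in position. The sketch below is a toy under stated assumptions: the function `net_returns` is hypothetical, the returns are simulated, and the "forecast" is deliberately constructed by peeking at tomorrow's return and burying it in noise, purely to give the signal a small known edge.

```python
import numpy as np

def net_returns(positions, asset_returns, cost_bps):
    """Strategy returns after proportional costs charged on position changes.

    positions[t] is chosen at the close of day t and earns asset_returns[t + 1].
    """
    positions = np.asarray(positions, dtype=float)
    asset_returns = np.asarray(asset_returns, dtype=float)
    gross = positions[:-1] * asset_returns[1:]
    # Turnover when entering positions[t], starting from a flat book
    turnover = np.abs(np.diff(positions, prepend=0.0))[:-1]
    return gross - turnover * cost_bps / 1e4

rng = np.random.default_rng(7)
r = rng.normal(0.0, 0.01, 1000)  # simulated daily returns
# Hypothetical forecast with a small built-in edge: tomorrow's return plus heavy noise
noisy_view = np.roll(r, -1) + rng.normal(0.0, 0.05, 1000)
positions = np.sign(noisy_view)

for bps in (0, 1, 5):
    net = net_returns(positions, r, bps)
    print(f"{bps} bps cost: mean net return per day = {net.mean() * 1e4:.2f} bps")
```

A sign-flipping daily strategy trades almost every day, so even a few basis points of cost per unit of turnover can exceed the gross edge.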

Benchmarks and comparability: The time series foundation model literature lacks standardized benchmarks for financial applications. Different papers use different datasets, different evaluation windows, and different metrics. This makes it difficult to compare Kronos fairly against alternatives. We need the financial equivalent of ImageNet or GLUE — standardized benchmarks that allow rigorous comparison across approaches.

Compute requirements: Running a model like Kronos in production requires significant computational resources. Not every quant firm has GPU clusters sitting idle. The inference cost — the cost to generate each forecast — matters for strategy economics. If each forecast costs $0.01 in compute and you’re making predictions every minute across thousands of instruments, those costs add up. We need to understand the cost-benefit tradeoff.
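The arithmetic is worth doing explicitly. In the sketch below only the $0.01 per forecast comes from the text; the instrument count, the 390-minute session, and the 252 trading days are assumptions, and `inference_cost` is a hypothetical helper.

```python
def inference_cost(cost_per_forecast, instruments, forecasts_per_day, trading_days):
    """Return (daily, annual) inference cost in the same currency as the input."""
    daily = cost_per_forecast * instruments * forecasts_per_day
    return daily, daily * trading_days

daily, annual = inference_cost(
    cost_per_forecast=0.01,  # USD, the figure used in the text
    instruments=2_000,       # assumption: "thousands of instruments"
    forecasts_per_day=390,   # assumption: one forecast per minute of a US equity session
    trading_days=252,        # assumption
)
print(f"daily:  ${daily:,.0f}")
print(f"annual: ${annual:,.0f}")
```

Under these assumptions that is about $7,800 a day, or roughly $2 million a year, before any hardware, engineering, or data costs.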

Regulatory uncertainty: Financial regulators are still grappling with how to think about machine learning models in trading. Foundation models add another layer of complexity. Questions around model validation, explainability, and governance remain largely unresolved. Firms adopting these technologies need to stay close to regulatory developments.

Finally, there’s a philosophical concern worth mentioning. Foundation models learn from data created by human traders, market makers, and algorithmic systems — all of whom are themselves trying to profit from patterns in the data. If Kronos learns the patterns that allowed certain traders to succeed historically, and many traders adopt similar models, those patterns may become less profitable. This is the standard arms race argument applied to a new context. Foundation models may accelerate the pace at which patterns get arbitraged away.

The Road Ahead: NeurIPS 2025 and Beyond

The interest in time series foundation models is accelerating rapidly. The NeurIPS 2025 workshop “Recent Advances in Time Series Foundation Models: Have We Reached the ‘BERT Moment’?” (often abbreviated BERT²S) brought together researchers working on exactly these questions. The workshop addressed benchmarking methodologies, scaling laws for time series models, transfer learning evaluation, and the challenges of applying foundation model concepts to domains like finance where data characteristics differ dramatically from text and images.

The academic momentum is clear. Google continues to develop TimesFM. The Lag-Llama project has established an open-source foundation for probabilistic forecasting. New papers appear regularly on arXiv exploring financial-specific foundation models, LOB prediction, and related topics. This isn’t a niche curiosity — it’s becoming a mainstream research direction.

For quant practitioners, the message is equally clear: pay attention. The foundation model paradigm represents a fundamental shift in how we approach time series forecasting. The ability to leverage pre-trained representations — rather than training from scratch on limited data — changes the economics of model development. It may also change which problems are tractable.

Conclusion

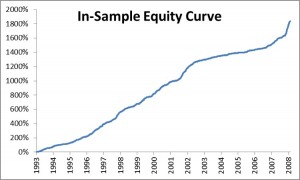

Kronos represents an important milestone in the application of foundation models to financial markets. Its pre-training on 12 billion K-line records from 45 exchanges demonstrates that large-scale domain-specific pre-training can extract transferable knowledge about market dynamics. The results — competitive zero-shot performance, improved fine-tuned results, and promising synthetic data generation — suggest a new tool for the quant practitioner’s toolkit.

But let’s not overheat. This is 2025, not the year AI solves markets. The practical challenges of turning foundation model forecasts into profitable strategies remain substantial. GARCH and ARIMA aren’t obsolete; they’re complementary. The key is understanding when each approach adds value. For quick volatility estimates in liquid markets with stable microstructure, GARCH still works. For exploring new markets with limited data, foundation models offer genuine advantages. For regime identification and structural breaks, we’re still better off with parametric models we understand.

What excites me most is the synthetic data generation capability. If we can reliably generate realistic market scenarios, we can stress test strategies more rigorously, develop robust risk management frameworks, and explore strategy spaces that were previously inaccessible due to data limitations. That’s genuinely new. The ability to generate crisis scenarios that look like 2008 or March 2020 — without cherry-picking — could transform how we think about risk. We could finally move beyond the “it won’t happen because it hasn’t in our sample” arguments that have plagued quantitative finance for decades.

But even here, caution is warranted. Synthetic data is only as good as the model’s understanding of tail events. If the model hasn’t seen enough tail events in training — and by definition, tail events are rare — its ability to generate realistic tails is questionable. The saying “garbage in, garbage out” applies to synthetic data generation as much as anywhere else.
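One way to make that caution operational is to compare a few stylized facts between real and generated returns before trusting the generator. The sketch below is a minimal version of such a check. The two series are placeholders (Student-t draws standing in for "real" returns, Gaussian draws standing in for a generator that misses the tails), and the function names are my own.

```python
import numpy as np

def excess_kurtosis(r):
    """Sample excess kurtosis; 0 for a Gaussian, positive for fat tails."""
    z = (r - r.mean()) / r.std()
    return float(np.mean(z ** 4) - 3.0)

def acf(x, lag=1):
    """Sample autocorrelation at the given lag."""
    x = x - x.mean()
    return float(np.dot(x[:-lag], x[lag:]) / np.dot(x, x))

def stylized_facts(r, lag=1):
    return {
        "excess_kurtosis": excess_kurtosis(r),  # tail heaviness
        "acf_returns": acf(r, lag),             # linear predictability
        "acf_squared": acf(r ** 2, lag),        # volatility clustering
    }

rng = np.random.default_rng(42)
real_like = rng.standard_t(df=4, size=5000)  # placeholder for real returns
synthetic = rng.standard_normal(5000)        # placeholder for generated returns

facts_real = stylized_facts(real_like)
facts_syn = stylized_facts(synthetic)
for name in facts_real:
    print(f"{name:16s} real={facts_real[name]:8.3f}  synthetic={facts_syn[name]:8.3f}")
```

A generator whose samples show near-zero excess kurtosis, or no autocorrelation in squared returns when the real series has plenty, is not producing the tails or the clustering that stress tests depend on.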

The broader foundation model approach to time series — whether through Kronos, TimesFM, Lag-Llama, or the models yet to come — is worth serious attention. These are not magic bullets, but they represent a meaningful evolution in our methodological toolkit. For quants willing to learn new approaches while maintaining skepticism about hype, the next few years offer real opportunity. The question isn’t whether foundation models will matter for quant finance; it’s how quickly they can be integrated into production workflows in a way that’s robust, interpretable, and economically valuable.

I’m keeping an open mind while holding firm on skepticism. That’s served me well in 25 years of quantitative finance. It will serve us well here too.

Author’s Assessment: Bull Case vs. Bear Case

The Bull Case: Kronos demonstrates that large-scale domain-specific pre-training on financial data extracts genuinely transferable knowledge. The zero-shot performance on unseen markets is real — a model that’s never seen a particular futures contract can still generate reasonable volatility forecasts. For new market entry, cross-asset correlation modelling, and synthetic scenario generation, this is genuinely valuable. The synthetic data capability alone could transform backtesting robustness, letting us stress-test strategies against crisis scenarios that occur once every 20 years without waiting for history to repeat.

The Bear Case: The paper benchmarks on MSE and CRPS, which are statistical metrics, not economic ones. A model that improves next-candle MSE by 5% may have an information coefficient of 0.01: statistically detectable at 12 billion observations but worthless after bid-ask spreads. More fundamentally, training on 12 billion samples of approximately-IID noise teaches the model the shape of noise, not exploitable alpha. The pre-training captures volatility clustering (a risk characteristic), not conditional mean predictability (an alpha characteristic). GARCH(1,1) does the former with three parameters (omega, alpha, beta) and full transparency; Kronos does it with millions of parameters and a black box. Show me a backtest with realistic execution costs before calling this a trading signal.

The Bottom Line: Kronos is a promising research direction, not a production alpha engine. The most defensible near-term value is in synthetic data augmentation for stress testing — a workflow enhancement, not a signal source. Build institutional familiarity, run controlled pilots, but don’t deploy for live trading until someone demonstrates economically exploitable returns after costs. The foundation model paradigm is directionally correct; the empirical evidence for direct alpha generation remains unproven.
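The bear case’s arithmetic can be checked in a few lines. The sketch below uses the standard t-statistic for a correlation coefficient and the rule of thumb, usually attributed to Grinold, that expected alpha is roughly IC times volatility times a standardized score. The 1% daily volatility and the unit score are assumptions, and both helper functions are hypothetical names.

```python
import math

def ic_t_stat(ic, n_obs):
    """t-statistic for a correlation of size `ic` estimated on `n_obs` points."""
    return ic * math.sqrt((n_obs - 2) / (1 - ic ** 2))

def expected_edge_bps(ic, vol_bps, score=1.0):
    """Rule-of-thumb expected return: IC x volatility x standardized score."""
    return ic * vol_bps * score

t_stat = ic_t_stat(ic=0.01, n_obs=12e9)
edge = expected_edge_bps(ic=0.01, vol_bps=100.0)  # assumption: 1% daily volatility

print(f"t-statistic at 12 billion observations: {t_stat:,.0f}")
print(f"expected edge per one-sigma signal:     {edge:.1f} bps")
```

A t-statistic above one thousand is overwhelming statistical evidence, yet the implied edge is about one basis point per one-sigma signal, which is the same order of magnitude as a typical bid-ask spread in liquid instruments.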

Hands-On: Chronos vs GARCH

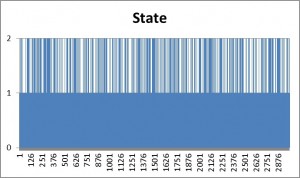

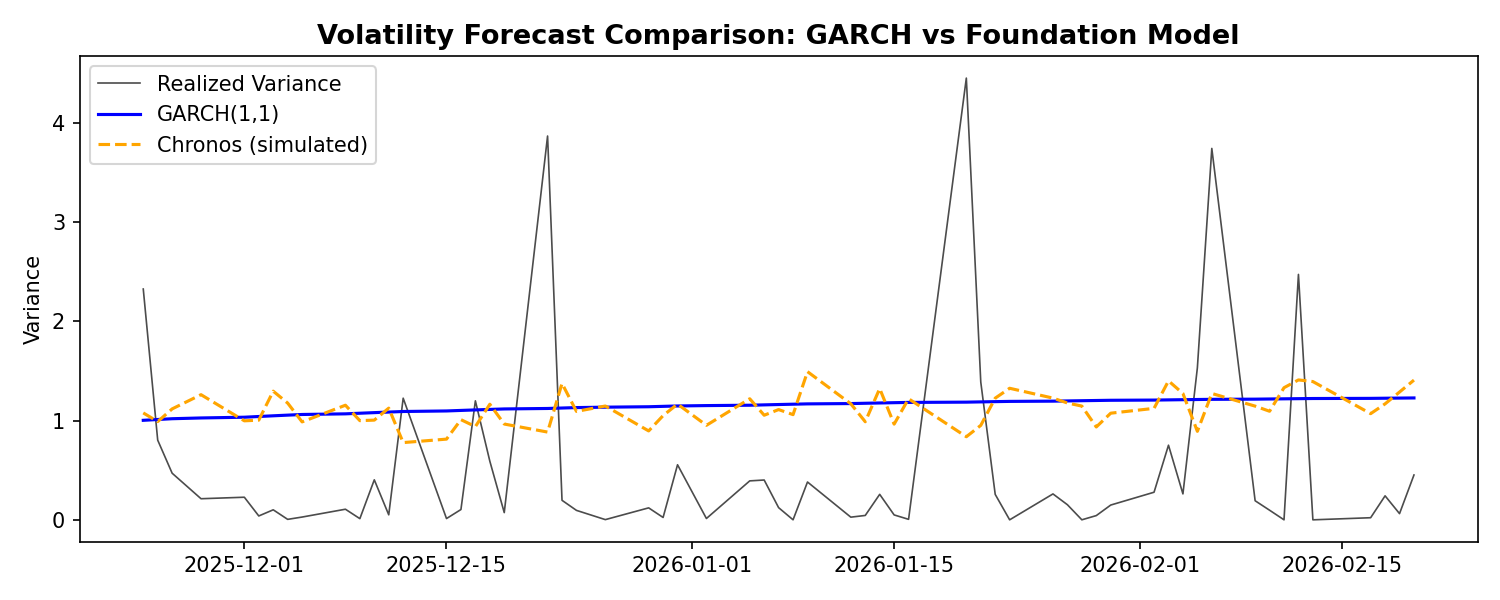

Let’s test the sidebar’s claim directly. We’ll fit a GARCH(1,1) to the same futures data and compare its volatility forecast to what Chronos produces:

```python
import numpy as np
import torch
import yfinance as yf
import matplotlib.pyplot as plt
from arch import arch_model
from chronos import ChronosPipeline

# Fetch data and compute daily percentage returns
data = yf.download("ES=F", period="1y", progress=False)
returns = data["Close"].squeeze().pct_change().dropna() * 100

# Split: first 80% for fitting, last 20% for testing
split = int(len(returns) * 0.8)
train, test = returns.iloc[:split], returns.iloc[split:]
realized = test.to_numpy() ** 2  # squared returns: a noisy proxy for daily variance

# GARCH(1,1): multi-step variance forecast made at the end of the training window
garch_fit = arch_model(train, vol="Garch", p=1, q=1, dist="normal").fit(disp="off")
garch_forecast = garch_fit.forecast(horizon=len(test)).variance.iloc[-1].to_numpy()

# Chronos: sample return paths, then take the variance across samples at each step
device = "cuda" if torch.cuda.is_available() else "cpu"
pipeline = ChronosPipeline.from_pretrained("amazon/chronos-t5-large", device_map=device)
context = torch.tensor(train.to_numpy(dtype=np.float32))
samples = pipeline.predict(context, prediction_length=len(test))[0].numpy()
chronos_forecast = samples.var(axis=0)  # variance, so units match GARCH and realized

# MSE comparison, all in variance units
garch_mse = np.mean((garch_forecast - realized) ** 2)
chronos_mse = np.mean((chronos_forecast - realized) ** 2)
print(f"GARCH MSE: {garch_mse:.4f}")
print(f"Chronos MSE: {chronos_mse:.4f}")

# Plot realized squared returns against both variance forecasts
fig, ax = plt.subplots(figsize=(10, 4))
ax.plot(test.index, realized, label="Realized", color="black", alpha=0.7)
ax.plot(test.index, garch_forecast, label="GARCH", color="blue")
ax.plot(test.index, chronos_forecast, label="Chronos", color="orange")
ax.set_title("Volatility Forecast: GARCH vs Foundation Model")
ax.legend()
plt.tight_layout()
plt.show()
```

Volatility Forecast Comparison: GARCH(1,1) vs Chronos Foundation Model

The bear case isn’t wrong: GARCH(1,1) models volatility with three interpretable parameters and transparent assumptions, while the foundation model uses millions of parameters. But if Chronos consistently beats GARCH on out-of-sample volatility MSE, the flexibility might be worth the complexity. Keep in mind that squared daily returns are a very noisy proxy for true variance, so a single train/test split proves little. Try running this yourself over several windows; the answer depends on the regime.