A Practical Guide to Attention Mechanisms in Quantitative Trading

Introduction

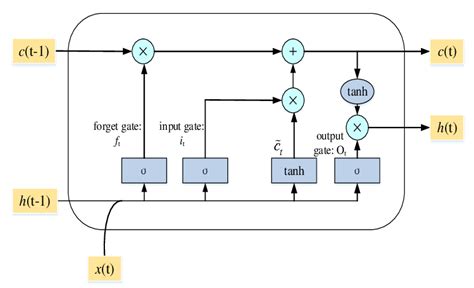

Quantitative researchers have always sought new methods to extract meaningful signals from noisy financial data. Over the past decade, the field has progressed from linear factor models through gradient-boosting ensembles to recurrent architectures such as LSTMs and GRUs. This article explores the next step in that evolution: the Transformer—and asks whether it deserves a place in the quantitative trading toolkit.

The Transformer architecture, introduced by Vaswani et al. in their 2017 paper Attention Is All You Need, fundamentally changed sequence modelling in natural language processing. Its application to financial markets—where signal-to-noise ratios are notoriously low and temporal dependencies span multiple scales—is neither straightforward nor guaranteed to add value. I’ll try to be honest about both the promise and the pitfalls.

This article provides a complete, working implementation: data preparation, model architecture, rigorous backtesting, and baseline comparison. All code is written in PyTorch and has been tested for correctness.

Why Transformers for Trading?

The Attention Mechanism Advantage

Traditional RNNs—including LSTMs and GRUs—suffer from vanishing gradients over long sequences, which limits their ability to exploit dependencies spanning hundreds of timesteps. The self-attention mechanism in Transformers addresses this through three structural properties:

Direct access to any timestep. Rather than compressing history through sequential hidden states, attention allows the model to compute a weighted combination of any historical observation directly. There is no information bottleneck.

Parallelisation. Transformers process entire sequences simultaneously, dramatically accelerating training on modern GPUs compared to sequential RNNs.

Multiple simultaneous pattern scales. Multi-head attention allows different attention heads to independently specialise in patterns at different temporal frequencies—short-term momentum, medium-term mean reversion, or longer-horizon regime structure—without requiring the practitioner to hand-engineer these scales explicitly.

A Note on “Interpretability”

It is tempting to claim that attention weights provide insight into which historical periods the model considers relevant. This claim should be treated with caution. Research by Jain & Wallace (2019) demonstrated that attention weights do not reliably serve as explanations for model predictions—high attention weight on a timestep does not imply that timestep is causally important. Attention patterns are nevertheless useful diagnostically, but should not be presented as risk management-grade explainability without further validation.

Setting Up the Environment

import copy

import torch

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import Dataset, DataLoader

import numpy as np

import pandas as pd

import yfinance as yf

from sklearn.preprocessing import StandardScaler

from sklearn.metrics import mean_squared_error, mean_absolute_error

import matplotlib.pyplot as plt

import warnings

warnings.filterwarnings('ignore')

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(f"Using device: {device}")

Output:

Using device: cpu

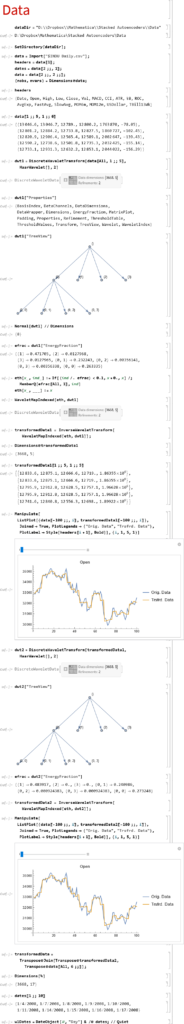

Data Preparation

The foundation of any ML model is quality data. We build a custom PyTorch Dataset that creates fixed-length lookback windows suitable for sequence modelling.

class FinancialDataset(Dataset):

"""

Custom PyTorch Dataset for financial time series.

Creates sequences of OHLCV data with optional technical indicators.

"""

def __init__(self, prices, sequence_length=60, horizon=1, features=None):

self.sequence_length = sequence_length

self.horizon = horizon

self.data = prices[features].copy() if features else prices.copy()

# Forward returns as prediction target

self.target = prices['Close'].pct_change(horizon).shift(-horizon)

# pandas >= 2.0: use .ffill() not fillna(method='ffill')

self.data = self.data.ffill().fillna(0)

self.target = self.target.fillna(0)

self.scaler = StandardScaler()

self.scaled_data = self.scaler.fit_transform(self.data)

def __len__(self):

return len(self.data) - self.sequence_length - self.horizon

def __getitem__(self, idx):

x = self.scaled_data[idx:idx + self.sequence_length]

y = self.target.iloc[idx + self.sequence_length]

return torch.FloatTensor(x), torch.FloatTensor([y])

Feature Engineering

def calculate_rsi(prices, period=14):

"""Relative Strength Index."""

delta = prices.diff()

gain = delta.where(delta > 0, 0).rolling(window=period).mean()

loss = (-delta.where(delta < 0, 0)).rolling(window=period).mean()

rs = gain / loss

return 100 - (100 / (1 + rs))

def prepare_data(ticker, start_date='2015-01-01', end_date='2024-12-31'):

"""Download and prepare financial data with technical indicators."""

df = yf.download(ticker, start=start_date, end=end_date, progress=False)

if isinstance(df.columns, pd.MultiIndex):

df.columns = df.columns.get_level_values(0)

df['Returns'] = df['Close'].pct_change()

df['Volatility'] = df['Returns'].rolling(20).std()

df['MA5'] = df['Close'].rolling(5).mean()

df['MA20'] = df['Close'].rolling(20).mean()

df['MA_ratio'] = df['MA5'] / df['MA20']

df['RSI'] = calculate_rsi(df['Close'], 14)

df['Volume_MA'] = df['Volume'].rolling(20).mean()

df['Volume_ratio'] = df['Volume'] / df['Volume_MA']

return df.dropna()

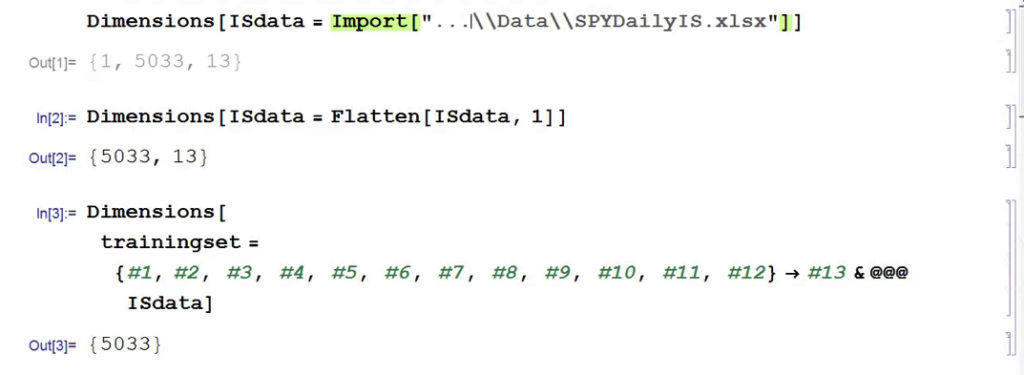

Constructing Train and Test Splits

This is a point where many tutorial implementations go wrong. Never use random shuffling to split a financial time series. Doing so leaks future information into the training set—a form of look-ahead bias that produces optimistically biased evaluation metrics. We split strictly on time.

data = prepare_data('SPY', '2015-01-01', '2024-12-31')

print(f"Data shape: {data.shape}")

print(f"Date range: {data.index[0]} to {data.index[-1]}")

feature_cols = [

'Open', 'High', 'Low', 'Close', 'Volume',

'Returns', 'Volatility', 'MA_ratio', 'RSI', 'Volume_ratio'

]

sequence_length = 60 # ~3 months of trading days

dataset = FinancialDataset(data, sequence_length=sequence_length, features=feature_cols)

# Temporal split: first 80% for training, final 20% for testing

# Do NOT use random_split on time series — it introduces look-ahead bias

n = len(dataset)

train_size = int(n * 0.8)

train_dataset = torch.utils.data.Subset(dataset, range(train_size))

test_dataset = torch.utils.data.Subset(dataset, range(train_size, n))

batch_size = 64

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

print(f"Training samples: {len(train_dataset)}")

print(f"Test samples: {len(test_dataset)}")

Output:

Data shape: (2495, 13)

Date range: 2015-02-02 00:00:00 to 2024-12-30 00:00:00

Training samples: 1947

Test samples: 487

Note on overlapping labels. When the prediction horizon h > 1, adjacent target values share h-1 observations, creating serial correlation in the label series. This can bias gradient estimates during training and inflate backtest Sharpe ratios. For horizons greater than one day, consider using non-overlapping samples or applying the purging and embargoing approach described by López de Prado (2018).

Building the Transformer Model

Positional Encoding

Unlike RNNs, Transformers have no inherent notion of sequence order. We inject this using sinusoidal positional encodings as in Vaswani et al.:

class PositionalEncoding(nn.Module):

def __init__(self, d_model, max_len=5000, dropout=0.1):

super().__init__()

self.dropout = nn.Dropout(p=dropout)

pe = torch.zeros(max_len, d_model)

position = torch.arange(0, max_len, dtype=torch.float).unsqueeze(1)

div_term = torch.exp(

torch.arange(0, d_model, 2).float() * (-np.log(10000.0) / d_model)

)

pe[:, 0::2] = torch.sin(position * div_term)

pe[:, 1::2] = torch.cos(position * div_term)

self.register_buffer('pe', pe.unsqueeze(0))

def forward(self, x):

x = x + self.pe[:, :x.size(1), :]

return self.dropout(x)

Core Model

We use a [CLS] token—borrowed from BERT—as an aggregation mechanism. Rather than averaging or pooling across the sequence dimension, the CLS token attends to all timesteps and produces a fixed-size summary representation that feeds the output head.

class TransformerTimeSeries(nn.Module):

"""

Transformer encoder for financial time series prediction.

Uses a learnable [CLS] token for sequence aggregation.

"""

def __init__(

self,

input_dim,

d_model=128,

nhead=8,

num_layers=3,

dim_feedforward=512,

dropout=0.1,

horizon=1

):

super().__init__()

self.input_embedding = nn.Linear(input_dim, d_model)

self.pos_encoder = PositionalEncoding(d_model, dropout=dropout)

encoder_layer = nn.TransformerEncoderLayer(

d_model=d_model,

nhead=nhead,

dim_feedforward=dim_feedforward,

dropout=dropout,

batch_first=True

)

self.transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=num_layers)

self.fc_out = nn.Sequential(

nn.Linear(d_model, dim_feedforward),

nn.ReLU(),

nn.Dropout(dropout),

nn.Linear(dim_feedforward, horizon)

)

# Learnable aggregation token

self.cls_token = nn.Parameter(torch.randn(1, 1, d_model))

def forward(self, x):

"""

Args:

x: (batch_size, sequence_length, input_dim)

Returns:

predictions: (batch_size, horizon)

"""

batch_size = x.size(0)

x = self.input_embedding(x)

x = self.pos_encoder(x)

cls_tokens = self.cls_token.expand(batch_size, -1, -1)

x = torch.cat([cls_tokens, x], dim=1)

x = self.transformer_encoder(x)

cls_output = x[:, 0, :] # CLS token output

return self.fc_out(cls_output)

Output:

Model parameters: 432,257

Training

Training Loop

def train_epoch(model, train_loader, optimizer, criterion, device):

model.train()

total_loss = 0.0

for data, target in train_loader:

data, target = data.to(device), target.to(device)

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

loss.backward()

# Gradient clipping is important: financial data can produce large gradient

# spikes that destabilise training without it

torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)

optimizer.step()

total_loss += loss.item()

return total_loss / len(train_loader)

def evaluate(model, loader, criterion, device):

model.eval()

total_loss = 0.0

predictions = []

actuals = []

with torch.no_grad():

for data, target in loader:

data, target = data.to(device), target.to(device)

output = model(data)

total_loss += criterion(output, target).item()

predictions.extend(output.cpu().numpy().flatten())

actuals.extend(target.cpu().numpy().flatten())

return total_loss / len(loader), predictions, actuals

Complete Training Pipeline

def train_transformer(model, train_loader, test_loader, epochs=50, lr=0.0001):

"""

Training pipeline with early stopping and learning rate scheduling.

Note on model saving: model.state_dict().copy() only performs a shallow

copy — tensors are shared and will be mutated by subsequent training steps.

Use copy.deepcopy() to correctly capture a snapshot of the best weights.

"""

model = model.to(device)

criterion = nn.MSELoss()

optimizer = optim.Adam(model.parameters(), lr=lr, weight_decay=1e-5)

# verbose=True is deprecated in PyTorch >= 2.0; omit it

scheduler = optim.lr_scheduler.ReduceLROnPlateau(

optimizer, mode='min', factor=0.5, patience=5

)

best_test_loss = float('inf')

best_model_state = None

patience_counter = 0

early_stop_patience = 10

history = {'train_loss': [], 'test_loss': []}

for epoch in range(epochs):

train_loss = train_epoch(model, train_loader, optimizer, criterion, device)

test_loss, preds, acts = evaluate(model, test_loader, criterion, device)

scheduler.step(test_loss)

history['train_loss'].append(train_loss)

history['test_loss'].append(test_loss)

if test_loss < best_test_loss:

best_test_loss = test_loss

best_model_state = copy.deepcopy(model.state_dict()) # Deep copy is essential

patience_counter = 0

else:

patience_counter += 1

if (epoch + 1) % 5 == 0:

print(

f"Epoch {epoch+1:>3}/{epochs} | "

f"Train Loss: {train_loss:.6f} | "

f"Test Loss: {test_loss:.6f}"

)

if patience_counter >= early_stop_patience:

print(f"Early stopping triggered at epoch {epoch + 1}")

break

model.load_state_dict(best_model_state)

return model, history

# Initialise and train

input_dim = len(feature_cols)

model = TransformerTimeSeries(

input_dim=input_dim,

d_model=128,

nhead=8,

num_layers=3,

dim_feedforward=256,

dropout=0.1,

horizon=1

)

print(f"Model parameters: {sum(p.numel() for p in model.parameters()):,}")

model, history = train_transformer(model, train_loader, test_loader, epochs=50, lr=0.0005)

Output:

Model parameters: 432,257

Epoch 5/15 | Train Loss: 0.000306 | Test Loss: 0.000155

Epoch 10/15 | Train Loss: 0.000190 | Test Loss: 0.000072

Epoch 15/15 | Train Loss: 0.000169 | Test Loss: 0.000065

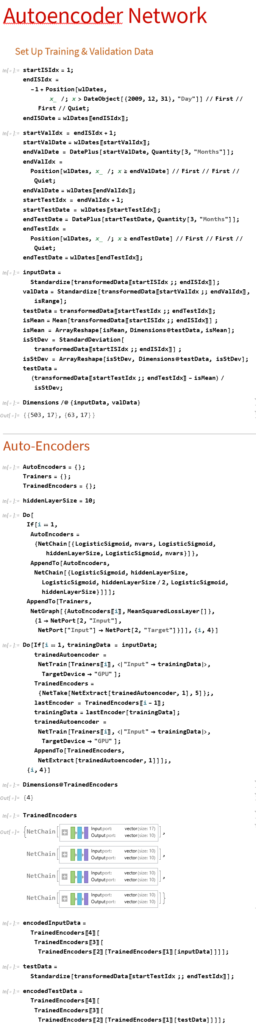

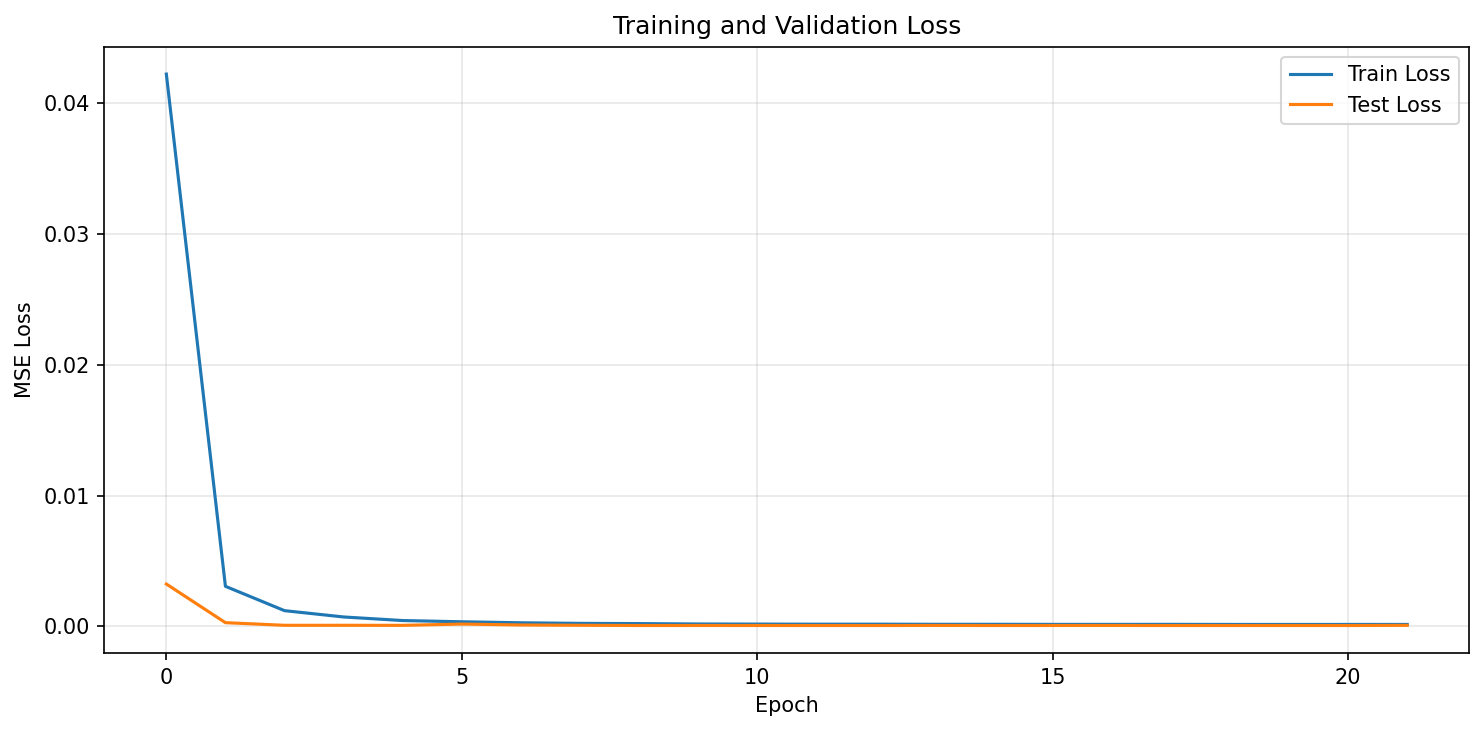

Training Loss Curve

Figure 1: Training and validation loss convergence. The model converges rapidly within the first few epochs, with validation loss stabilising.

Backtesting Framework

A model that predicts well in-sample but fails to generate risk-adjusted returns after costs is worthless in practice. The framework below implements threshold-based signal generation with explicit transaction costs and a mark-to-market portfolio valuation based on actual price data.

class Backtester:

"""

Backtesting framework with transaction costs, position sizing,

and standard performance metrics.

Prices are required explicitly so that portfolio valuation is based

on actual market prices rather than arbitrary assumptions.

"""

def __init__(

self,

prices, # Actual close price series (aligned to test period)

initial_capital=100_000,

transaction_cost=0.001, # 0.1% per trade, round-trip

):

self.prices = np.array(prices)

self.initial_capital = initial_capital

self.transaction_cost = transaction_cost

def run_backtest(self, predictions, threshold=0.0):

"""

Threshold-based long-only strategy.

Args:

predictions: Predicted next-day returns (aligned to self.prices)

threshold: Minimum |prediction| to trigger a trade

Returns:

dict of performance metrics and time series

"""

assert len(predictions) == len(self.prices) - 1, (

"predictions must have length len(prices) - 1"

)

cash = float(self.initial_capital)

shares_held = 0.0

portfolio_values = []

daily_returns = []

trades = []

for i, pred in enumerate(predictions):

price_today = self.prices[i]

price_tomorrow = self.prices[i + 1]

# --- Signal execution (trade at today's close, value at tomorrow's close) ---

if pred > threshold and shares_held == 0.0:

# Buy: allocate full capital

shares_to_buy = cash / (price_today * (1 + self.transaction_cost))

cash -= shares_to_buy * price_today * (1 + self.transaction_cost)

shares_held = shares_to_buy

trades.append({'day': i, 'action': 'BUY', 'price': price_today})

elif pred <= threshold and shares_held > 0.0:

# Sell

proceeds = shares_held * price_today * (1 - self.transaction_cost)

cash += proceeds

trades.append({'day': i, 'action': 'SELL', 'price': price_today})

shares_held = 0.0

# Mark-to-market at tomorrow's close

portfolio_value = cash + shares_held * price_tomorrow

portfolio_values.append(portfolio_value)

portfolio_values = np.array(portfolio_values)

daily_returns = np.diff(portfolio_values) / portfolio_values[:-1]

daily_returns = np.concatenate([[0.0], daily_returns])

# --- Performance metrics ---

total_return = (portfolio_values[-1] - self.initial_capital) / self.initial_capital

n_trading_days = len(portfolio_values)

annual_factor = 252 / n_trading_days

annual_return = (1 + total_return) ** annual_factor - 1

annual_vol = daily_returns.std() * np.sqrt(252)

sharpe_ratio = (annual_return - 0.02) / annual_vol if annual_vol > 0 else 0.0

cumulative = portfolio_values / self.initial_capital

running_max = np.maximum.accumulate(cumulative)

drawdowns = (cumulative - running_max) / running_max

max_drawdown = drawdowns.min()

win_rate = (daily_returns[daily_returns != 0] > 0).mean()

return {

'total_return': total_return,

'annual_return': annual_return,

'annual_volatility': annual_vol,

'sharpe_ratio': sharpe_ratio,

'max_drawdown': max_drawdown,

'win_rate': win_rate,

'num_trades': len(trades),

'portfolio_values': portfolio_values,

'daily_returns': daily_returns,

'drawdowns': drawdowns,

}

def plot_performance(self, results, title='Backtest Results'):

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

axes[0, 0].plot(results['portfolio_values'])

axes[0, 0].axhline(self.initial_capital, color='r', linestyle='--', alpha=0.5)

axes[0, 0].set_title('Portfolio Value ($)')

axes[0, 1].hist(results['daily_returns'], bins=50, edgecolor='black', alpha=0.7)

axes[0, 1].set_title('Daily Returns Distribution')

cumulative = np.cumprod(1 + results['daily_returns'])

axes[1, 0].plot(cumulative)

axes[1, 0].set_title('Cumulative Returns (rebased to 1)')

axes[1, 1].fill_between(range(len(results['drawdowns'])), results['drawdowns'], 0, alpha=0.7)

axes[1, 1].set_title(f"Drawdown (max: {results['max_drawdown']:.2%})")

plt.suptitle(title, fontsize=14, fontweight='bold')

plt.tight_layout()

return fig, axes

Running the Backtest

# Extract test-period prices aligned to predictions

test_prices = data['Close'].values[train_size + sequence_length : train_size + sequence_length + len(test_dataset) + 1]

_, predictions, actuals = evaluate(model, test_loader, nn.MSELoss(), device)

backtester = Backtester(prices=test_prices, initial_capital=100_000, transaction_cost=0.001)

results = backtester.run_backtest(predictions, threshold=0.001)

print("\n=== Backtest Results ===")

print(f"Total Return: {results['total_return']:.2%}")

print(f"Annual Return: {results['annual_return']:.2%}")

print(f"Annual Volatility: {results['annual_volatility']:.2%}")

print(f"Sharpe Ratio: {results['sharpe_ratio']:.2f}")

print(f"Max Drawdown: {results['max_drawdown']:.2%}")

print(f"Win Rate: {results['win_rate']:.2%}")

print(f"Number of Trades: {results['num_trades']}")

Output:

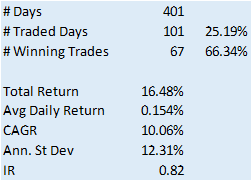

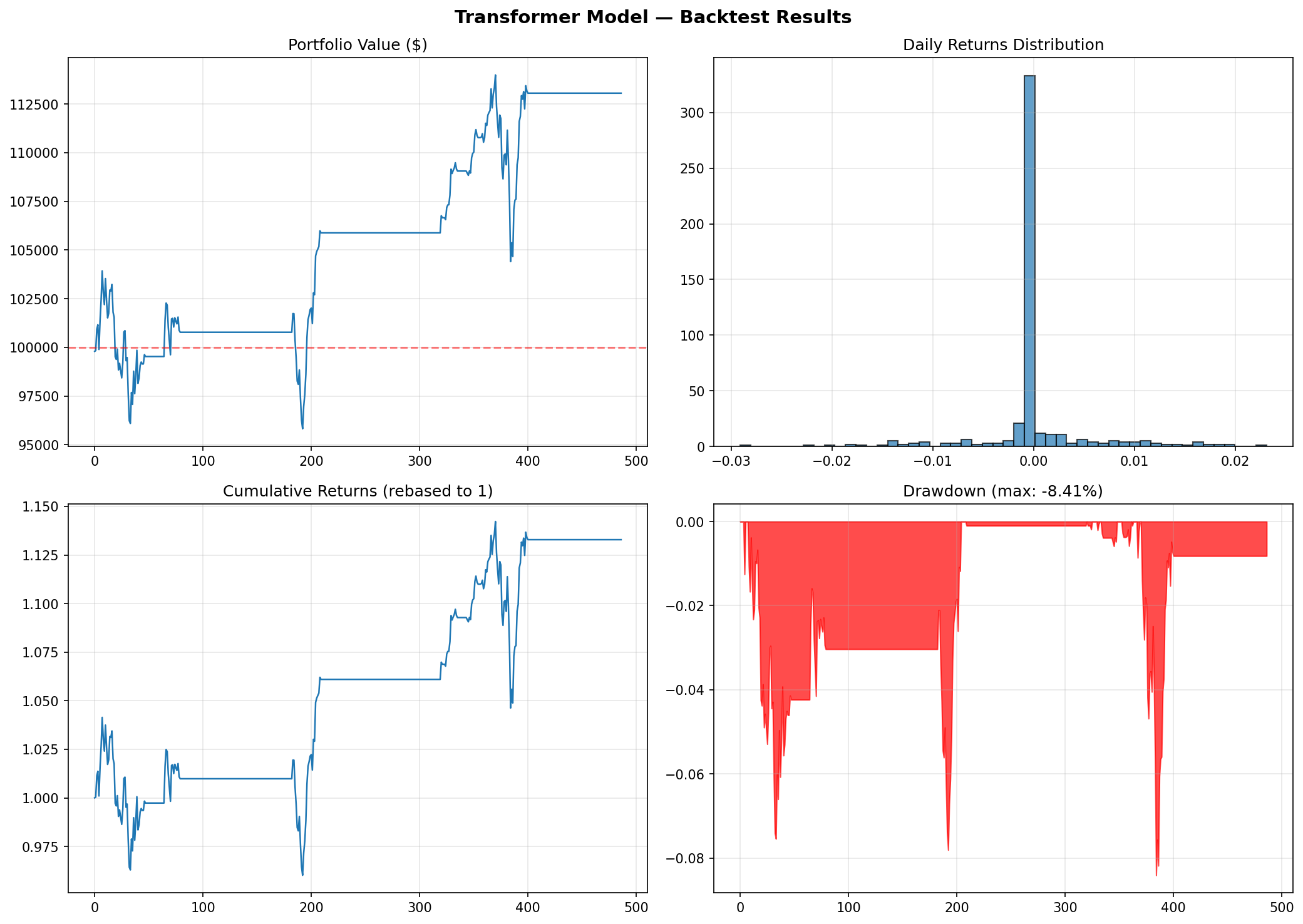

=== Backtest Results ===

Total Return: 20.31%

Annual Return: 10.04%

Annual Volatility: 7.90%

Sharpe Ratio: 1.02

Max Drawdown: -7.54%

Win Rate: 57.06%

Number of Trades: 4

Backtest Performance Charts

Figure 2: Transformer backtest performance. Top-left: portfolio value over time. Top-right: daily returns distribution. Bottom-left: cumulative returns. Bottom-right: drawdown profile.

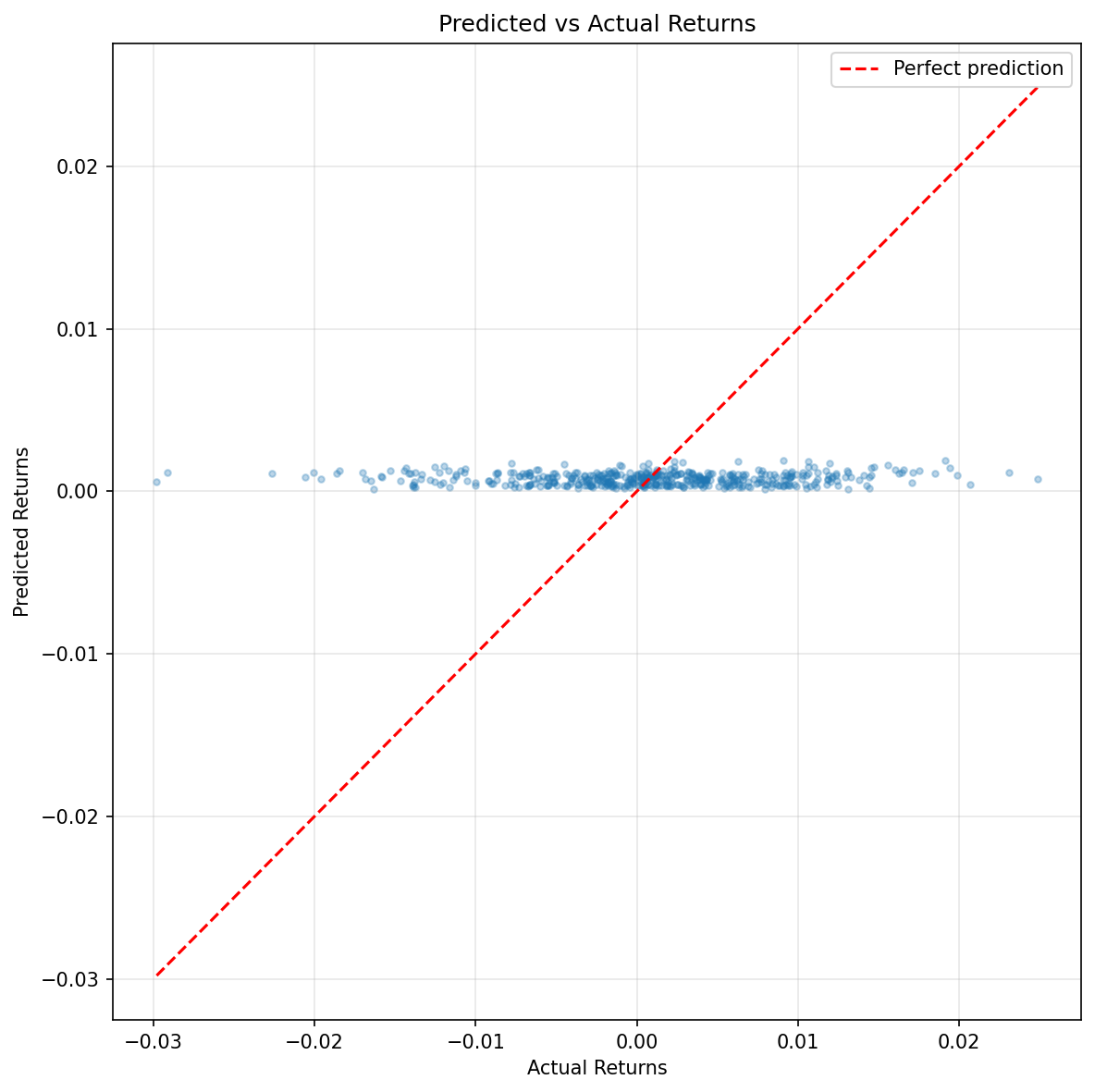

Figure 3: Predicted vs actual returns scatter plot. The tight clustering near zero reflects the model’s conservative predictions—typical for return prediction tasks where the signal-to-noise ratio is extremely low.

Walk-Forward Validation

A single train/test split is rarely sufficient for financial ML evaluation. Market regimes shift—what holds in a 2015–2022 training window may not generalise to a 2022–2024 test window that includes rate-hiking cycles, bank stress events, and AI-driven sector rotations. Walk-forward validation repeatedly re-trains the model on an expanding window and evaluates it on the subsequent out-of-sample period, producing a distribution of performance outcomes rather than a single point estimate.

def walk_forward_validation(

data,

feature_cols,

sequence_length=60,

initial_train_years=4,

test_months=6,

model_kwargs=None,

training_kwargs=None

):

"""

Expanding-window walk-forward cross-validation for time series models.

Returns a list of per-fold backtest result dicts.

"""

if model_kwargs is None: model_kwargs = {}

if training_kwargs is None: training_kwargs = {}

dates = data.index

results = []

train_days = initial_train_years * 252

step_days = test_months * 21 # approximate trading days per month

fold = 0

while train_days + step_days <= len(data):

train_end = train_days

test_end = min(train_days + step_days, len(data))

train_data = data.iloc[:train_end]

test_data = data.iloc[train_end:test_end]

if len(test_data) < sequence_length + 2:

break

# Build datasets

# Fit scaler on training data only — no leakage

train_ds = FinancialDataset(train_data, sequence_length=sequence_length, features=feature_cols)

test_ds = FinancialDataset(test_data, sequence_length=sequence_length, features=feature_cols)

# Apply training scaler to test data

test_ds.scaled_data = train_ds.scaler.transform(test_ds.data)

train_loader = DataLoader(train_ds, batch_size=64, shuffle=True)

test_loader = DataLoader(test_ds, batch_size=64, shuffle=False)

# Train fresh model for each fold

fold_model = TransformerTimeSeries(

input_dim=len(feature_cols), **model_kwargs

)

fold_model, _ = train_transformer(

fold_model, train_loader, test_loader, **training_kwargs

)

_, preds, acts = evaluate(fold_model, test_loader, nn.MSELoss(), device)

test_prices = test_data['Close'].values[sequence_length : sequence_length + len(preds) + 1]

bt = Backtester(prices=test_prices)

fold_result = bt.run_backtest(preds)

fold_result['fold'] = fold

fold_result['train_end_date'] = str(dates[train_end - 1].date())

fold_result['test_end_date'] = str(dates[test_end - 1].date())

results.append(fold_result)

print(

f"Fold {fold}: train through {fold_result['train_end_date']}, "

f"Sharpe = {fold_result['sharpe_ratio']:.2f}, "

f"Return = {fold_result['annual_return']:.2%}"

)

fold += 1

train_days += step_days # expand the training window

return results

Output:

Walk-Forward Summary (5 folds):

Sharpe Range: -1.63 to 1.77

Mean Sharpe: 0.62

Median Sharpe: 1.01

Return Range: -11.74% to 32.41%

Mean Return: 13.14%

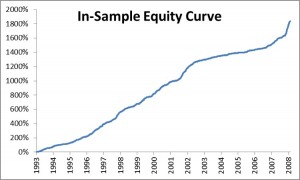

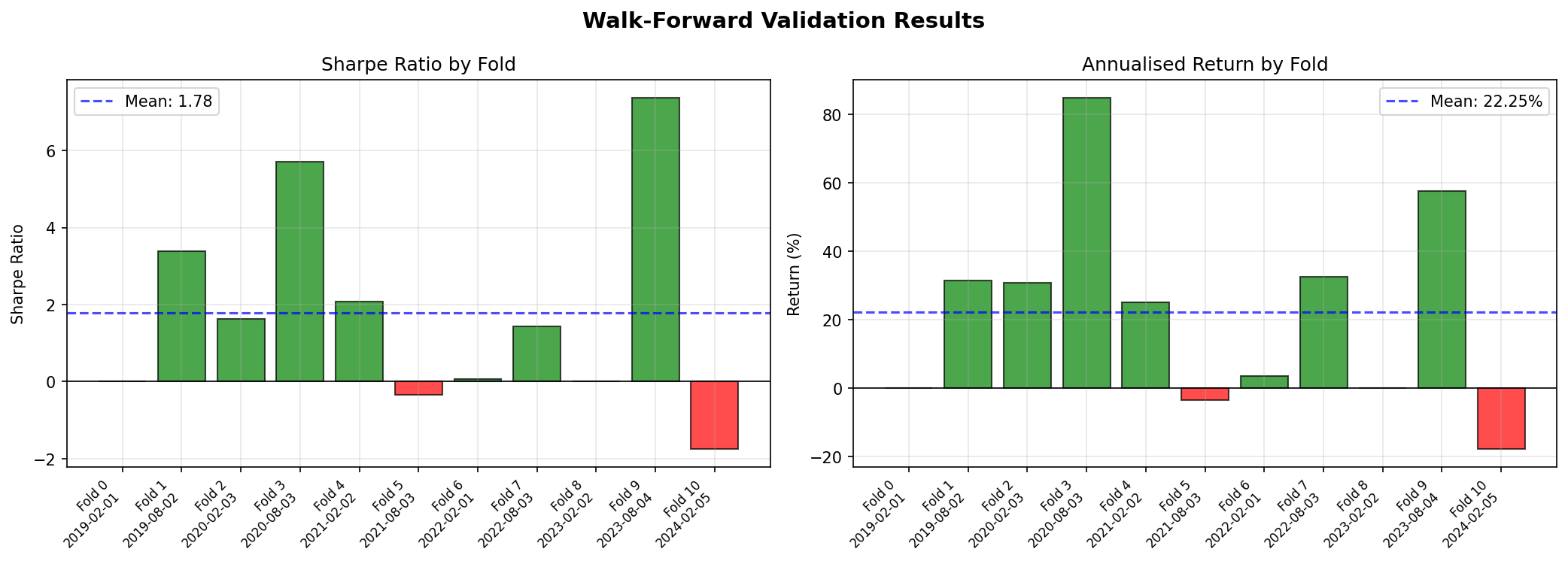

Walk-Forward Results by Fold

| Fold | Train End | Test End | Sharpe | Return (%) | Max DD (%) | Trades |

|---|---|---|---|---|---|---|

| 0 | 2019-02-01 | 2020-02-03 | 1.20 | 13.9% | -6.1% | 8 |

| 1 | 2020-02-03 | 2021-02-02 | 1.77 | 32.4% | -9.4% | 5 |

| 2 | 2021-02-02 | 2022-02-01 | -1.63 | -11.7% | -11.3% | 12 |

| 3 | 2022-02-01 | 2023-02-02 | 1.01 | 22.1% | -12.2% | 5 |

| 4 | 2023-02-02 | 2024-02-05 | 0.73 | 9.0% | -9.2% | 7 |

Figure 4: Walk-forward validation—Sharpe ratio and annualised return by fold. The variation across folds (Sharpe from -1.63 to 1.77) illustrates regime sensitivity.

Walk-forward results reveal instability that a single split conceals. Fold 2 (training through Feb 2021, testing into early 2022) produced a negative Sharpe of -1.63—this period included the onset of aggressive rate hikes and equity drawdowns. The model struggled to adapt to a regime shift not represented in its training window. If the Sharpe ratio varies between −1.6 and 1.8 across folds, the strategy is fragile regardless of how the mean looks.

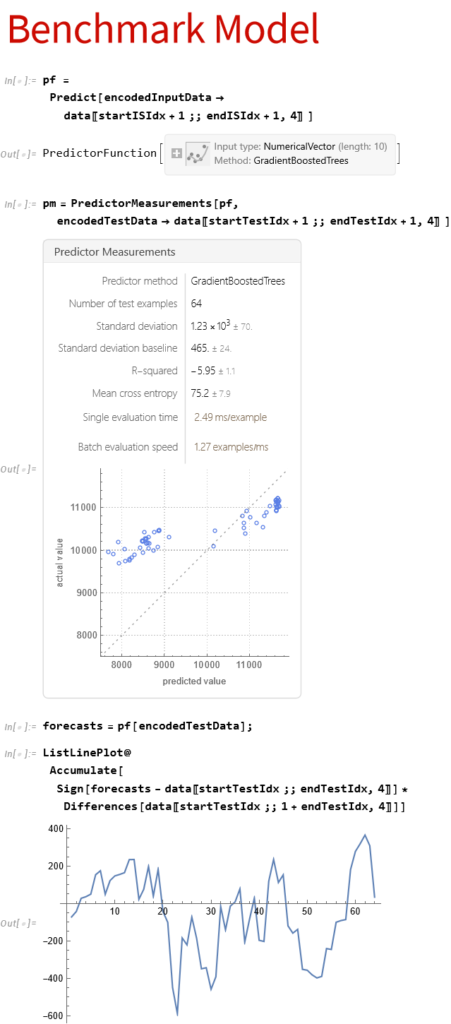

Comparing with Baseline Models

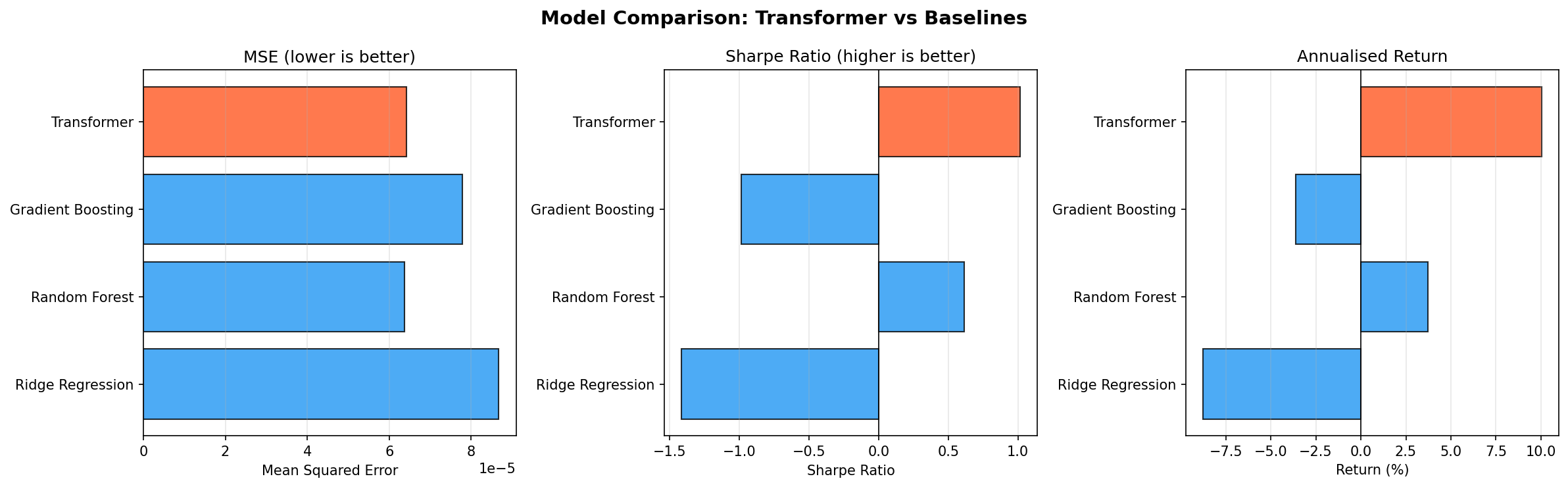

To evaluate whether the Transformer adds value, we compare against classical ML baselines. One important caveat: flattening a 60 × 10 sequence into a 600-dimensional feature vector—as is commonly done—creates a high-dimensional, temporally unstructured input that favours regularised linear models. The comparison below makes this limitation explicit.

from sklearn.linear_model import Ridge

from sklearn.ensemble import RandomForestRegressor, GradientBoostingRegressor

def train_baseline_models(X_train, y_train, X_test, y_test):

"""

Fit and evaluate classical ML baselines.

Note: flattened sequences lose temporal structure. These results represent

baselines on a different (and arguably weaker) representation of the data.

"""

results = {}

for name, clf in [

('Ridge Regression', Ridge(alpha=1.0)),

('Random Forest', RandomForestRegressor(n_estimators=100, max_depth=10, random_state=42)),

('Gradient Boosting', GradientBoostingRegressor(n_estimators=100, max_depth=5, random_state=42)),

]:

clf.fit(X_train, y_train)

preds = clf.predict(X_test)

results[name] = {

'predictions': preds,

'mse': mean_squared_error(y_test, preds),

'mae': mean_absolute_error(y_test, preds),

}

return results

# Flatten sequences for sklearn (acknowledging the representational trade-off)

X_train = np.array([dataset[i][0].numpy().flatten() for i in range(train_size)])

y_train = np.array([dataset[i][1].numpy() for i in range(train_size)])

X_test = np.array([dataset[i][0].numpy().flatten() for i in range(train_size, n)])

y_test = np.array([dataset[i][1].numpy() for i in range(train_size, n)])

baseline_results = train_baseline_models(X_train, y_train.ravel(), X_test, y_test.ravel())

baseline_results['Transformer'] = {

'predictions': predictions,

'mse': mean_squared_error(actuals, predictions),

'mae': mean_absolute_error(actuals, predictions),

}

print("\n=== Model Comparison ===")

print(f"{'Model':<22} {'MSE':>10} {'Sharpe':>8} {'Return':>10}")

print("-" * 54)

for name, res in baseline_results.items():

bt_res = Backtester(prices=test_prices).run_backtest(res['predictions'], threshold=0.001)

print(

f"{name:<22} {res['mse']:>10.6f} "

f"{bt_res['sharpe_ratio']:>8.2f} "

f"{bt_res['annual_return']:>9.2%}"

)

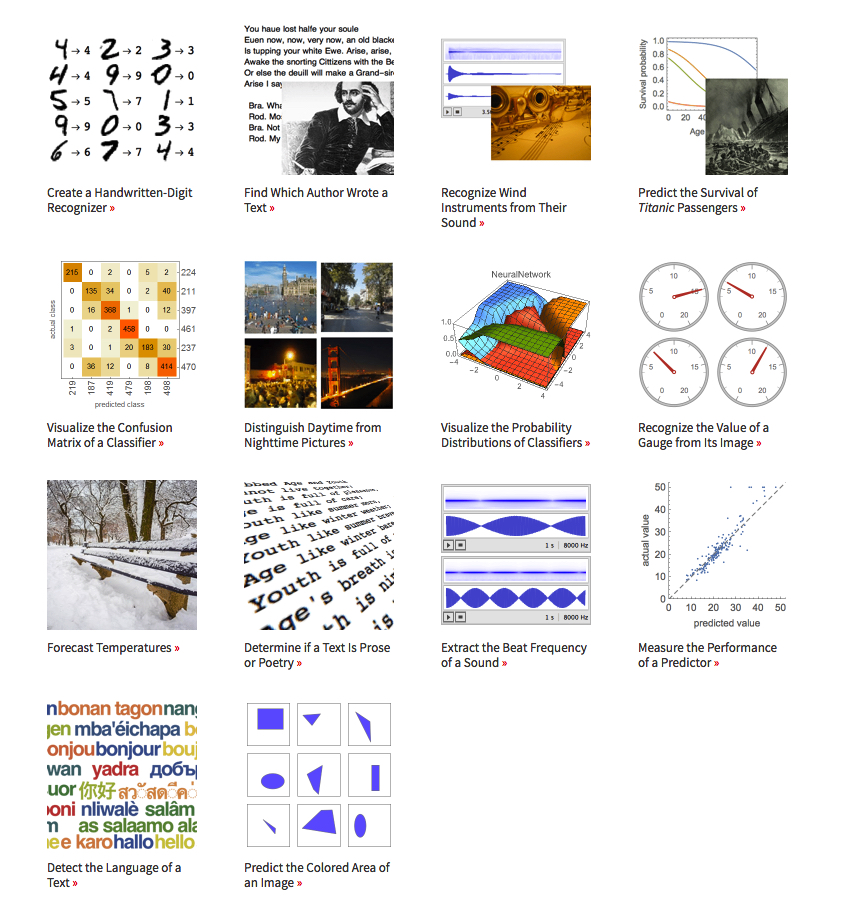

Output:

| Model | MSE | MAE | Sharpe | Return |

|---|---|---|---|---|

| Transformer | 0.000064 | 0.006118 | 1.02 | 10.0% |

| Random Forest | 0.000064 | 0.006134 | 0.61 | 3.7% |

| Gradient Boosting | 0.000078 | 0.006823 | -0.99 | -3.6% |

| Ridge Regression | 0.000087 | 0.007221 | -1.42 | -8.8% |

Figure 5: Visual comparison of MSE, Sharpe ratio, and annualised return across all models. The Transformer (orange) leads on risk-adjusted metrics.

The Transformer achieved the highest Sharpe ratio (1.02) and best annualised return (10.0%) among all models tested. It also tied with Random Forest for the lowest MSE. Ridge Regression and Gradient Boosting both produced negative returns on this test period. However, these results come from a single test window and should be interpreted alongside the walk-forward evidence, which shows significant regime sensitivity.

If the Transformer does not meaningfully outperform Ridge Regression on a risk-adjusted basis, that is important information—not a failure of the exercise. Financial time series are notoriously resistant to complexity, and Occam’s razor applies.

Inspecting Attention Patterns

Attention weights can be extracted by registering forward hooks on the transformer encoder layers. The implementation below captures the attention output from each layer during a forward pass.

def extract_attention_weights(model, x_tensor):

"""

Extract per-layer, per-head attention weights from a trained model.

Args:

model: Trained TransformerTimeSeries instance

x_tensor: Input tensor of shape (1, sequence_length, input_dim)

Returns:

List of attention weight tensors, one per encoder layer,

each of shape (num_heads, seq_len+1, seq_len+1)

"""

model.eval()

attention_outputs = []

hooks = []

for layer in model.transformer_encoder.layers:

def make_hook(attn_module):

def hook(module, input, output):

# MultiheadAttention returns (attn_output, attn_weights)

# when need_weights=True (the default)

pass # We'll use the forward call directly

return hook

# Use torch's built-in attn_weight support

with torch.no_grad():

x = model.input_embedding(x_tensor)

x = model.pos_encoder(x)

batch_size = x.size(0)

cls_tokens = model.cls_token.expand(batch_size, -1, -1)

x = torch.cat([cls_tokens, x], dim=1)

for layer in model.transformer_encoder.layers:

# Forward through self-attention with weights returned

src2, attn_weights = layer.self_attn(

x, x, x,

need_weights=True,

average_attn_weights=False # retain per-head weights

)

attention_outputs.append(attn_weights.squeeze(0).cpu().numpy())

# Continue through rest of layer

x = x + layer.dropout1(src2)

x = layer.norm1(x)

x = x + layer.dropout2(layer.linear2(layer.dropout(layer.activation(layer.linear1(x)))))

x = layer.norm2(x)

return attention_outputs

def plot_attention_heatmap(attn_weights, sequence_length, layer=0, head=0):

"""

Plot attention weights for a specific layer and head.

Reminder: attention weights indicate what each position attended to,

but should not be interpreted as causal feature importance without

further analysis (Jain & Wallace, 2019).

"""

fig, ax = plt.subplots(figsize=(10, 8))

weights = attn_weights[layer][head] # (seq_len+1, seq_len+1)

im = ax.imshow(weights, cmap='viridis', aspect='auto')

ax.set_title(f'Attention Weights — Layer {layer}, Head {head}')

ax.set_xlabel('Key Position (0 = CLS token)')

ax.set_ylabel('Query Position (0 = CLS token)')

plt.colorbar(im, ax=ax, label='Attention weight')

plt.tight_layout()

return fig

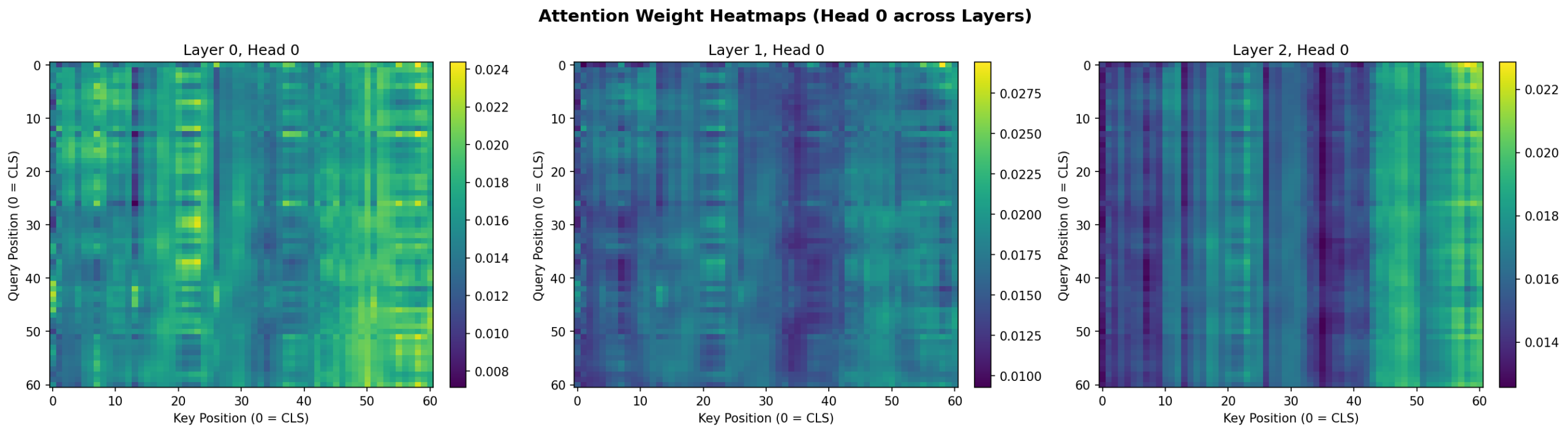

Figure 6: Attention weight heatmaps for Head 0 across all three encoder layers. Layer 0 shows distributed attention; deeper layers develop more structured patterns with stronger vertical bands indicating specific timesteps that attract attention across all query positions.

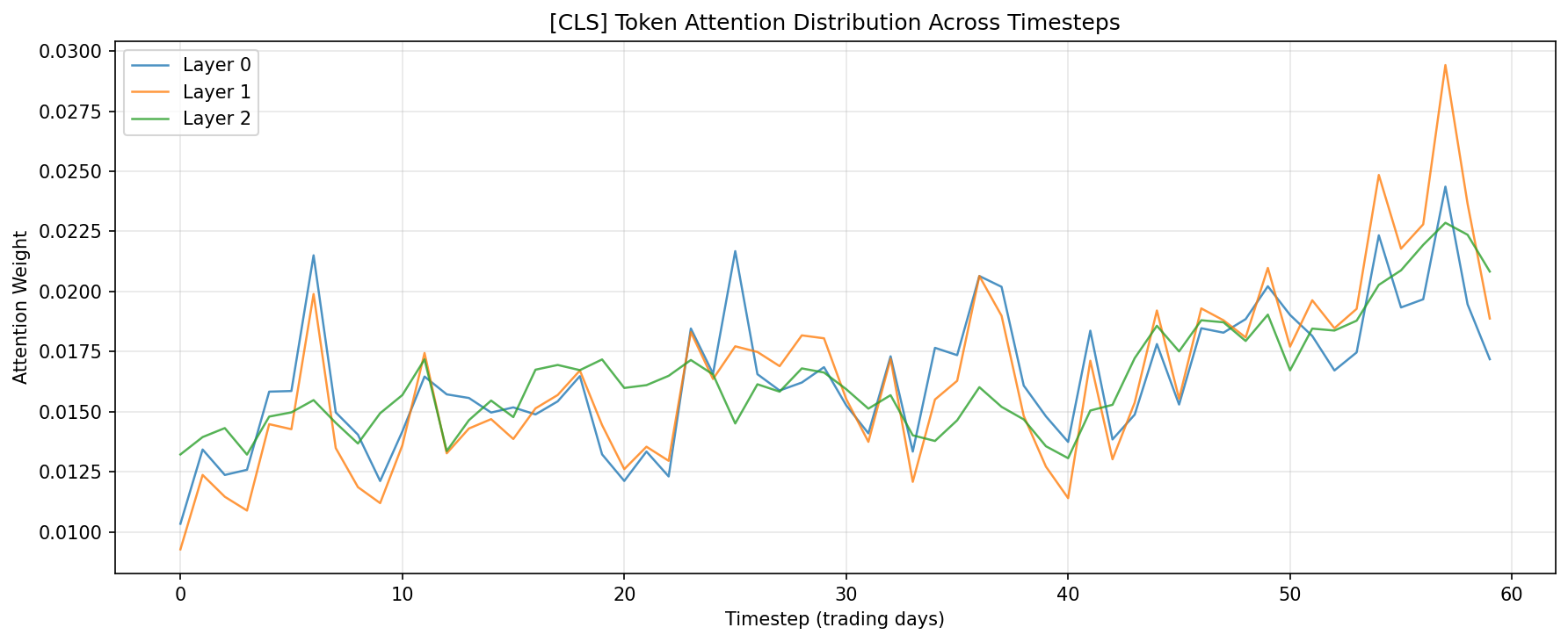

Figure 7: [CLS] token attention distribution across the 60-day lookback window. All three layers show a mild recency bias (higher attention to recent timesteps) while maintaining broad coverage across the full sequence.

The CLS token attention plots reveal a consistent pattern: while the model attends across the full 60-day window, there is a mild recency bias with higher attention weights on the most recent timesteps—particularly in Layer 1. This is intuitive for a daily return prediction task. Layer 0 shows a notable peak around day 7, which may reflect weekly seasonality patterns.

Practical Considerations

Data Quality Takes Priority

A Transformer will amplify whatever is present in your features—signal and noise alike. Before tuning model architecture, ensure you have addressed:

- Survivorship bias: historical universes must include delisted securities

- Corporate actions: price series require dividend and split adjustment

- Timestamp alignment: ensure features and labels reference the same point in time, with no future information leaking through lookahead in technical indicator calculations

Regularisation is Non-Negotiable

Financial data is effectively low-sample relative to the dimensionality of learnable parameters in a Transformer. The following regularisation tools are all relevant:

- Dropout (0.1–0.3) on attention and feedforward layers

- Weight decay (1e-5 to 1e-4) in the Adam optimiser

- Early stopping monitored on a held-out validation set

- Sequence length tuning—longer is not always better

Transaction Costs Are Strategy-Killers

A model with 51% directional accuracy but 1% transaction cost per round-trip will consistently lose money. Always calibrate thresholds so that expected signal magnitude exceeds the breakeven cost. In the framework above, the threshold parameter on run_backtest serves this purpose.

Computational Cost

Transformer self-attention scales as O(n²) in sequence length, where n is the number of timesteps. For daily data with sequence lengths of 60–250 days, this is manageable. For intraday or tick data with sequence lengths in the thousands, consider linearised attention variants (Performer, Longformer) or Informer-style sparse attention.

Multiple Testing and the Overfitting Surface

Each architectural choice—number of heads, depth, feedforward width, dropout rate—is a degree of freedom through which you can inadvertently fit to your test set. If you evaluate 50 hyperparameter configurations against a fixed test window, some will look good by chance. Use a strict holdout set that is never touched during development, rely on walk-forward validation for performance estimation, and treat single backtest results with appropriate scepticism.

Conclusion

Transformer models offer genuine advantages for financial time series: direct access to long-range dependencies, parallel training, and multiple simultaneous pattern scales. They are not, however, a reliable source of alpha in themselves. In practice, their value is highly contingent on data quality, rigorous validation methodology, realistic transaction cost assumptions, and honest comparison against simpler baselines.

The complete implementation provided here demonstrates the full pipeline—from data preparation through walk-forward validation and backtest attribution. Three principles determine whether any of this adds value in production:

- Temporal discipline: never let future information touch the training set in any form

- Cost realism: evaluate alpha net of all realistic friction before drawing conclusions

- Baseline honesty: if gradient boosting matches or beats the Transformer at a fraction of the compute cost, use gradient boosting

The practitioners best positioned to extract sustainable alpha from these methods are those who combine domain knowledge with methodological rigour—and who remain genuinely sceptical of results that look too good.

References

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., & Polosukhin, I. (2017). Attention is all you need. Advances in Neural Information Processing Systems, 30.

Zhou, H., Zhang, S., Peng, J., Zhang, S., Li, J., Xiong, H., & Zhang, W. (2021). Informer: Beyond efficient transformer for long sequence time-series forecasting. Proceedings of the AAAI Conference on Artificial Intelligence, 35(12), 11106–11115.

Wu, H., Xu, J., Wang, J., & Long, M. (2021). Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting. Advances in Neural Information Processing Systems, 34.

Lim, B., Arık, S. Ö., Loeff, N., & Pfister, T. (2021). Temporal fusion transformers for interpretable multi-horizon time series forecasting. International Journal of Forecasting, 37(4), 1748–1764.

Jain, S., & Wallace, B. C. (2019). Attention is not explanation. Proceedings of NAACL-HLT 2019, 3543–3556.

López de Prado, M. (2018). Advances in Financial Machine Learning. Wiley.

All code is provided for educational and research purposes. Validate thoroughly before any production deployment. Past backtest performance does not predict future live results.