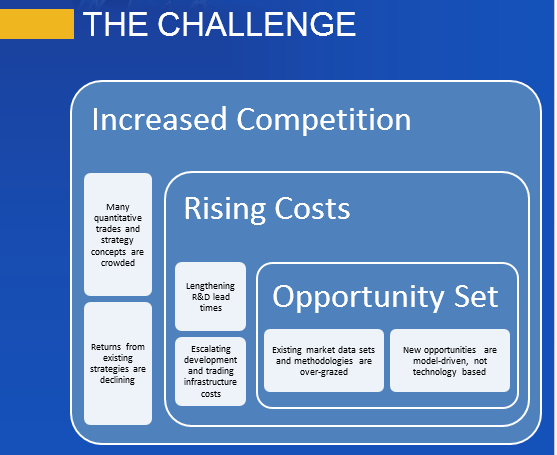

Anyone active in the quantitative space will tell you that it has become a great deal more competitive in recent years. Many quantitative trades and strategies are a lot more crowded than they used to be and returns from existing strategies are on the decline.

THE CHALLENGE

Meanwhile, costs have been rising steadily as the technology arms race has accelerated, with more money being spent on hardware, communications and software than ever before. As lead times to develop new strategies have lengthened, the cost of acquiring and maintaining expensive development resources has spiraled upwards. It is getting harder to find new, profitable strategies, due in part to the over-grazing of existing methodologies and data sets (the E-Mini futures, for example). There has also been a change in the direction of quantitative research in recent years. Where once it was simply a matter of acquiring the fastest pipe to as many relevant locations as possible, the marginal benefit of each extra dollar spent on infrastructure has since fallen rapidly. New strategy research and development is now more model-driven than technology-driven.

THE OPPORTUNITY

What is needed at this point is a new approach: one that accelerates the process of identifying new alpha signals, prototyping and testing new strategies and bringing them into production, leveraging existing battle-tested technologies and trading platforms.

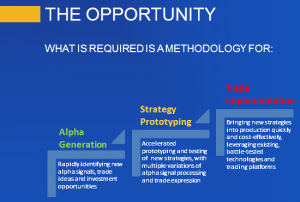

GENETIC PROGRAMMING

Genetic programming, which has been around since the 1990s, when its use was pioneered in proteomics, enjoys significant advantages over traditional research and development methodologies.

GP is an evolutionary algorithmic methodology in which a system is given a set of simple rules, some data, and a fitness function that scores how closely the outcome of combining the rules and applying them to the data matches the desired result. The idea is that, by testing large numbers of possible combinations of rules, typically in the millions, and allowing the most successful rules to propagate, we will eventually arrive at a strategy solution that offers the required characteristics.
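The evolutionary loop described above can be sketched in a few dozen lines. This is a toy illustration only, not the system discussed in this article: the momentum rules, the `and`/`or`/`not` operators, the next-bar P&L fitness function, the population size and every function name are assumptions chosen to keep the example small. It also scores candidates in-sample only, so it says nothing about how an evolved rule would behave on unseen data.

```python
import random

MAX_LOOKBACK = 5   # longest momentum window a rule may use (assumed)
MAX_DEPTH = 6      # cap on tree depth to limit bloat (assumed)

def random_tree(rng, depth=2):
    """Build a random rule tree from hypothetical momentum primitives."""
    if depth == 0 or rng.random() < 0.3:
        return ("rule", rng.choice(["up", "down"]), rng.randint(1, MAX_LOOKBACK))
    op = rng.choice(["and", "or", "not"])
    if op == "not":
        return ("not", random_tree(rng, depth - 1))
    return (op, random_tree(rng, depth - 1), random_tree(rng, depth - 1))

def tree_depth(tree):
    return 0 if tree[0] == "rule" else 1 + max(tree_depth(c) for c in tree[1:])

def signal(tree, prices, t):
    """Evaluate the rule tree at bar t; True means 'be long for the next bar'."""
    kind = tree[0]
    if kind == "rule":
        _, direction, k = tree
        change = prices[t] - prices[t - k]
        return change > 0 if direction == "up" else change < 0
    if kind == "not":
        return not signal(tree[1], prices, t)
    left, right = signal(tree[1], prices, t), signal(tree[2], prices, t)
    return (left and right) if kind == "and" else (left or right)

def fitness(tree, prices):
    """Total next-bar price change captured while the signal is on."""
    return sum(prices[t + 1] - prices[t]
               for t in range(MAX_LOOKBACK, len(prices) - 1)
               if signal(tree, prices, t))

def random_subtree(tree, rng):
    """Walk down the tree a random number of levels and return what is there."""
    while tree[0] != "rule" and rng.random() < 0.7:
        tree = rng.choice(tree[1:])
    return tree

def graft(tree, rng, donor=None):
    """Replace one randomly chosen subtree with `donor`, or with a fresh random subtree."""
    if tree[0] == "rule" or rng.random() < 0.3:
        return donor if donor is not None else random_tree(rng)
    if tree[0] == "not":
        return ("not", graft(tree[1], rng, donor))
    if rng.random() < 0.5:
        return (tree[0], graft(tree[1], rng, donor), tree[2])
    return (tree[0], tree[1], graft(tree[2], rng, donor))

def evolve(prices, pop_size=50, generations=20, seed=0):
    rng = random.Random(seed)
    population = [random_tree(rng) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=lambda tr: fitness(tr, prices), reverse=True)
        survivors = population[: pop_size // 5]   # the most successful rules propagate
        children = []
        while len(survivors) + len(children) < pop_size:
            a, b = rng.choice(survivors), rng.choice(survivors)
            child = graft(a, rng, donor=random_subtree(b, rng))   # crossover
            if rng.random() < 0.2:
                child = graft(child, rng)                         # mutation
            if tree_depth(child) > MAX_DEPTH:
                child = random_tree(rng)                          # discard bloated trees
            children.append(child)
        population = survivors + children
    return max(population, key=lambda tr: fitness(tr, prices))

# Usage on a synthetic random walk (no real market data is implied).
walk_rng = random.Random(1)
prices = [100.0]
for _ in range(300):
    prices.append(prices[-1] + walk_rng.gauss(0, 1))
best = evolve(prices)
print(best, round(fitness(best, prices), 2))
```

Because the top fifth of each generation is carried forward unchanged, the best fitness in the population can never fall from one generation to the next. A production system would search a far larger space than this toy does.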

ADVANTAGES OF GENETIC PROGRAMMING

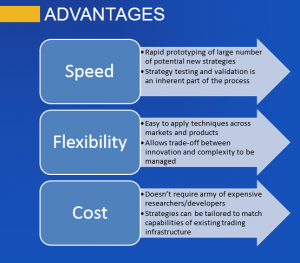

The potential benefits of the GP approach are considerable: not only are strategies developed much more quickly and cost-effectively (the price of some software and a single CPU vs. a small army of developers), but the process is also much more flexible. The inflexibility of the traditional approach to R&D is one of its principal shortcomings. The researcher produces a piece of research that is subsequently passed on to the development team. Developers are usually extremely rigid in their approach: when asked to deliver X, they will deliver X, not some variation on X. Unfortunately, research is not an exact science: what looks good in a back-test environment may not pass muster when implemented in live trading. So researchers need to “iterate around” the idea, trying different combinations of entry and exit logic, for example, until they find a variant that works. Developers are lousy at this; GP systems excel at it.

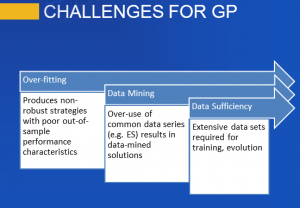

CHALLENGES FOR THE GENETIC PROGRAMMING APPROACH

So enticing are the potential benefits of GP that it raises the question of why the approach hasn’t been adopted more widely. One reason is the strong preference amongst researchers for an understandable – and testable – investment thesis. Researchers – and, more importantly, investors – are much more comfortable if they can articulate the premise behind a strategy. Even if a trade turns out to be a loser, we are generally more comfortable buying a stock on the supposition of, say, a positive outcome of a pending drug trial, than we are if required to trust the judgment of a black box, whose criteria are inherently unobservable.

Added to this, the GP approach suffers from three key drawbacks: data sufficiency, data mining and over-fitting. These are so well known that they hardly require further rehearsal. There have been many adverse outcomes resulting from poorly designed mechanical systems curve-fitted to the data. Anyone who was active in the space in the 1990s will recall the hype over neural networks and the exaggerated claims made for their efficacy in trading system design. Genetic Programming, a far more general and powerful concept, suffered unfairly from the ensuing adverse publicity, although it does face many of the same challenges.

A NEW APPROACH

I began working in the field of genetic programming in the 1990s, with my former colleague Haftan Eckholdt, at that time head of neuroscience at Yeshiva University, and we founded a hedge fund, Proteom Capital, based on that approach (largely due to Haftan’s research). My colleagues at Systematic Strategies and I have continued to work on GP-related ideas over the last twenty years, and during that period we have developed a methodology that addresses the weaknesses that have held back genetic programming from widespread adoption.

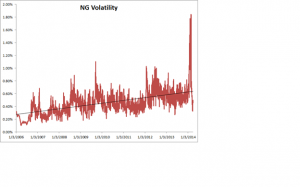

Firstly, we have evolved methods for transforming original data series that enable us to avoid over-using the same old data sets and, more importantly, allow new patterns to be revealed in the underlying market structure. This effectively eliminates the data mining bias that has plagued the GP approach. At the same time, because our process produces a stronger signal relative to the background noise, we consume far less data – typically no more than a couple of years’ worth.

Secondly, we have found we can enhance the robustness of prototype strategies by using double-blind testing, i.e. testing on data sets on which the performance of the model remains unknown to both the machine and the researcher prior to the final model selection.
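One way to picture the double-blind idea is a hold-out set that can be scored only once, after a model has been chosen. The sketch below is an assumption about how such a discipline might be enforced in code, not a description of our actual process: the `SealedHoldout` class, the 60/20/20 chronological split, the threshold "models" and the scoring function are all hypothetical.

```python
class SealedHoldout:
    """Holds data that may be scored exactly once, after model selection."""

    def __init__(self, data):
        self._data = list(data)
        self._opened = False

    def score_once(self, model, score_fn):
        if self._opened:
            raise RuntimeError("hold-out already used; a second look would leak information")
        self._opened = True
        return score_fn(model, self._data)

def split_chronologically(series, train_pct=60, select_pct=20):
    """Split into training, selection and sealed hold-out segments, oldest first."""
    n = len(series)
    train_end = n * train_pct // 100
    select_end = n * (train_pct + select_pct) // 100
    return series[:train_end], series[train_end:select_end], SealedHoldout(series[select_end:])

def score(threshold, data):
    """Hypothetical score: sum of the returns that follow a return above the threshold."""
    return sum(nxt for prev, nxt in zip(data, data[1:]) if prev > threshold)

# Synthetic returns, for illustration only.
returns = [((i * 7) % 11 - 5) / 100 for i in range(100)]
train, select, holdout = split_chronologically(returns)

candidates = [0.0, 0.01, 0.02]                              # hypothetical candidate models
chosen = max(candidates, key=lambda m: score(m, select))    # selection never sees the hold-out
final_score = holdout.score_once(chosen, score)             # scored once, after selection
```

Neither the search procedure nor the person running it can use the hold-out result to go back and adjust the choice, because a second call to `score_once` raises an error.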

Finally, we are able to test not only the alpha signal, but also multiple variations of the trade expression, including different types of entry and exit logic, as well as profit targets and stop loss constraints.
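Testing variations of the trade expression amounts to enumerating combinations of entry logic, exit logic, profit targets and stop losses for a given alpha signal. A minimal sketch follows; the specific entry and exit styles, the tick values and the dictionary keys are placeholders invented for illustration.

```python
from itertools import product

# Hypothetical choices for each dimension of the trade expression.
entry_styles = ["market", "limit", "stop"]
exit_styles = ["time_stop", "trailing_stop", "signal_reversal"]
profit_targets_ticks = [None, 10, 20]   # None means no profit target
stop_losses_ticks = [None, 5, 10]       # None means no stop loss

def trade_expressions():
    """Yield every combination of entry, exit, profit target and stop loss."""
    for entry, exit_, target, stop in product(
        entry_styles, exit_styles, profit_targets_ticks, stop_losses_ticks
    ):
        yield {
            "entry": entry,
            "exit": exit_,
            "profit_target_ticks": target,
            "stop_loss_ticks": stop,
        }

variants = list(trade_expressions())
print(len(variants))  # 3 * 3 * 3 * 3 combinations
```

Each variant would then be back-tested against the same alpha signal. The more variants that are tried, the more important an untouched hold-out set becomes.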

OUTCOMES: ROBUST, PROFITABLE STRATEGIES

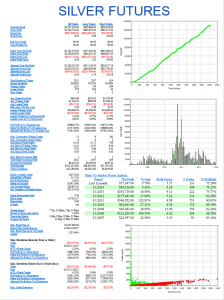

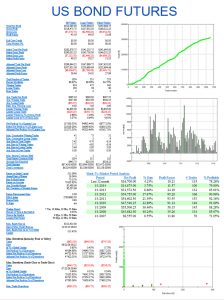

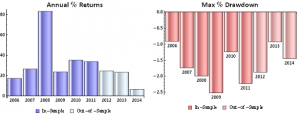

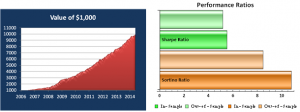

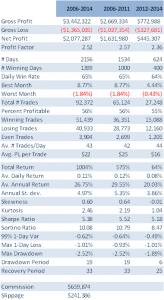

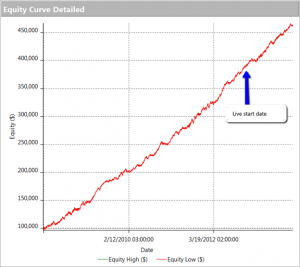

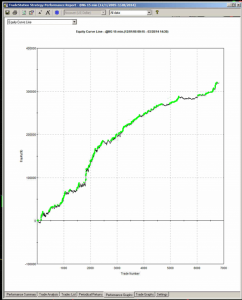

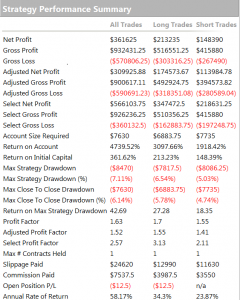

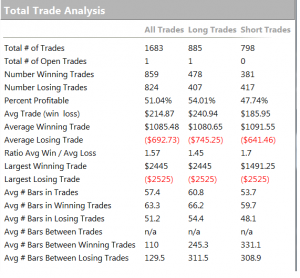

Taken together, these measures enable our GP system to produce strategies that not only have very high performance characteristics, but are also extremely robust. So, for example, having constructed a model using data only from the continuing bull market in equities in 2012 and 2013, the system is nonetheless capable of producing strategies that perform extremely well when tested out of sample over the highly volatile bear market conditions of 2008/09.

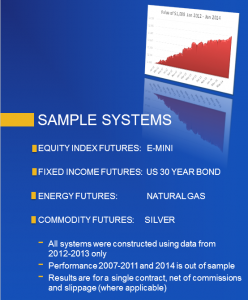

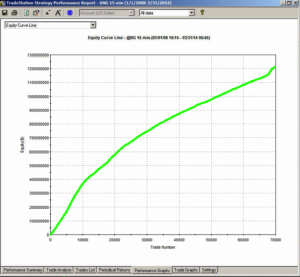

So stable are the results produced by many of the strategies, and so well risk-controlled, that it is possible to deploy leveraged money management techniques, such as Vince’s fixed fractional approach. Money management schemes take advantage of the high level of consistency in performance to increase the capital allocation to the strategy in a way that boosts returns without incurring a high risk of catastrophic loss. You can judge the benefits of applying these kinds of techniques in some of the strategies we have developed in equity, fixed income, commodity and energy futures, which are described below.
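In its common textbook form, fixed fractional sizing risks a fixed fraction of current equity on each trade, so the position grows as the account grows and shrinks after losses. The sketch below shows that generic form only. The function names, the 2% fraction and the per-contract risk figure are assumptions for illustration, not the parameters used in our strategies.

```python
import math

def fixed_fractional_contracts(equity, fraction, risk_per_contract):
    """Contracts to trade so that roughly `fraction` of equity is at risk."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if risk_per_contract <= 0:
        raise ValueError("risk_per_contract must be positive")
    return math.floor(equity * fraction / risk_per_contract)

def simulate(trade_pnls_per_contract, start_equity, fraction, risk_per_contract):
    """Compound equity through a sequence of per-contract trade P&Ls."""
    equity = start_equity
    for pnl in trade_pnls_per_contract:
        contracts = fixed_fractional_contracts(equity, fraction, risk_per_contract)
        equity += contracts * pnl
    return equity

# Hypothetical account: $100,000, risking 2% per trade, $450 at risk per contract.
print(fixed_fractional_contracts(100_000, 0.02, 450))
print(simulate([450, -450, 450], 100_000, 0.02, 450))
```

The same arithmetic that scales positions up during a consistent run also scales losses, which is why such schemes are only appropriate for strategies whose performance is stable and well risk-controlled.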

CONCLUSION

After 20-30 years of incubation, the Genetic Programming approach to strategy research and development has come of age. It is now entirely feasible to develop trading systems that far outperform the overwhelming majority of strategies produced by human researchers, in a fraction of the time and for a fraction of the cost.

SAMPLE GP SYSTEMS