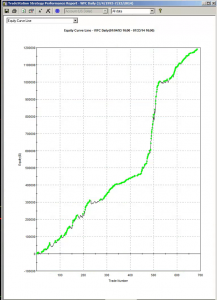

Below is the equity curve for an equity strategy I developed recently, implemented in WFC. The results appear outstanding: no losing years in over 20 years, a profit factor of 2.76 and an average win rate of 75%. Out-of-sample results (double blind) for 2013 and 2014: net returns of 27% and 16% (YTD), respectively.

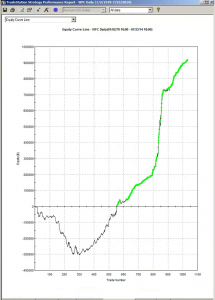

So far so good. However, if we step back through the earlier out-of-sample period, from 1970, the picture is rather less rosy:

Now, at this point, some of you will be saying: nothing to see here – it’s obviously just curve fitting. To which I would respond that I have seen successful strategies, including several hedge fund products, with far shorter and less impressive back-tests than the initial 20-year history I showed above.

That said, would you be willing to take the risk of trading a strategy such as this one? I would not: at the back of my mind would always be the concern that the market might easily revert to the conditions that applied during the 1970s and 1980s. I expect many investors would share that concern.

But to the point of this post: most strategies are designed around the criterion of maximizing net profit. Occasionally you might come across someone who has considered risk, perhaps in the form of drawdown, or Sharpe ratio. But, in general, it’s all about optimizing performance.

Suppose that, instead of maximizing performance, your objective was to maximize the robustness of the strategy. What criteria would you use?

In my own research, I have used a great many different objective functions, often multi-dimensional. Correlation to the perfect equity curve, net profit / max drawdown and Sortino ratio are just a few examples. But if I had to guess, I would say that the criterion that tends to produce the most robust strategies and the most reliable out-of-sample performance is the maximization of the win rate, subject to a minimum number of trades.
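To make the idea concrete, here is a minimal Python sketch of a win-rate objective with a minimum-trade constraint. It is an illustration only, not the code behind the strategy above: the function names (`win_rate`, `robustness_score`, `select_best`), the default threshold of 100 trades and the toy P&L figures are all assumptions made for the example.

```python
from typing import Dict, Optional, Sequence


def win_rate(trade_pnls: Sequence[float]) -> float:
    """Fraction of trades with strictly positive P&L (0.0 if there are no trades)."""
    if not trade_pnls:
        return 0.0
    wins = sum(1 for pnl in trade_pnls if pnl > 0)
    return wins / len(trade_pnls)


def robustness_score(trade_pnls: Sequence[float], min_trades: int = 100) -> float:
    """Win rate, or -inf when the candidate has fewer than min_trades trades.

    The min_trades default is a hypothetical value chosen for illustration.
    """
    if len(trade_pnls) < min_trades:
        return float("-inf")
    return win_rate(trade_pnls)


def select_best(
    candidates: Dict[str, Sequence[float]], min_trades: int = 100
) -> Optional[str]:
    """Name of the highest-scoring candidate, or None if none meets the constraint."""
    best_name, best_score = None, float("-inf")
    for name, pnls in candidates.items():
        score = robustness_score(pnls, min_trades)
        if score > best_score:
            best_name, best_score = name, score
    return best_name


# Toy per-trade P&L for three hypothetical parameter sets.
candidates = {
    "A": [1.0, -0.5, 2.0, 0.3],   # 3 winners out of 4 trades
    "B": [5.0, 4.0],              # all winners, but only 2 trades
    "C": [0.2, -0.1, -0.3, 0.4],  # 2 winners out of 4 trades
}
print(select_best(candidates, min_trades=3))  # A
```

Candidate B has the highest raw win rate but is rejected for having too few trades, which is exactly the job of the constraint: it stops the optimizer from favoring parameter sets that look perfect on a handful of trades.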

I am not aware of a great deal of theory on this topic. I would be interested to learn of other readers’ experience.